Introduction to the NVIDIA AI Red Team

June 14, 2023

Authors: Will Pearce and Joseph Lucas

Machine learning holds the promise of improving our world, and in many ways it already has. However, research and real-world experience continue to demonstrate that this technology carries risks. Capabilities once confined to science fiction and academia are increasingly becoming available to the public. The responsible use and development of AI requires categorizing, assessing, and mitigating enumerated risks wherever feasible. This is true from a purely AI perspective, but equally so from a standard information security standpoint.

Before standards are in place and mature testing gains a foothold, organizations are turning to red teams to explore and enumerate the immediate risks posed by AI. This post introduces the philosophy of the NVIDIA AI Red Team and a general framework for ML systems.

Assessment Foundations

Our AI Red Team is a cross-functional team composed of offensive security professionals and data scientists. We leverage our combined skill sets to assess our ML systems, identifying and helping mitigate any risks from an information security perspective.

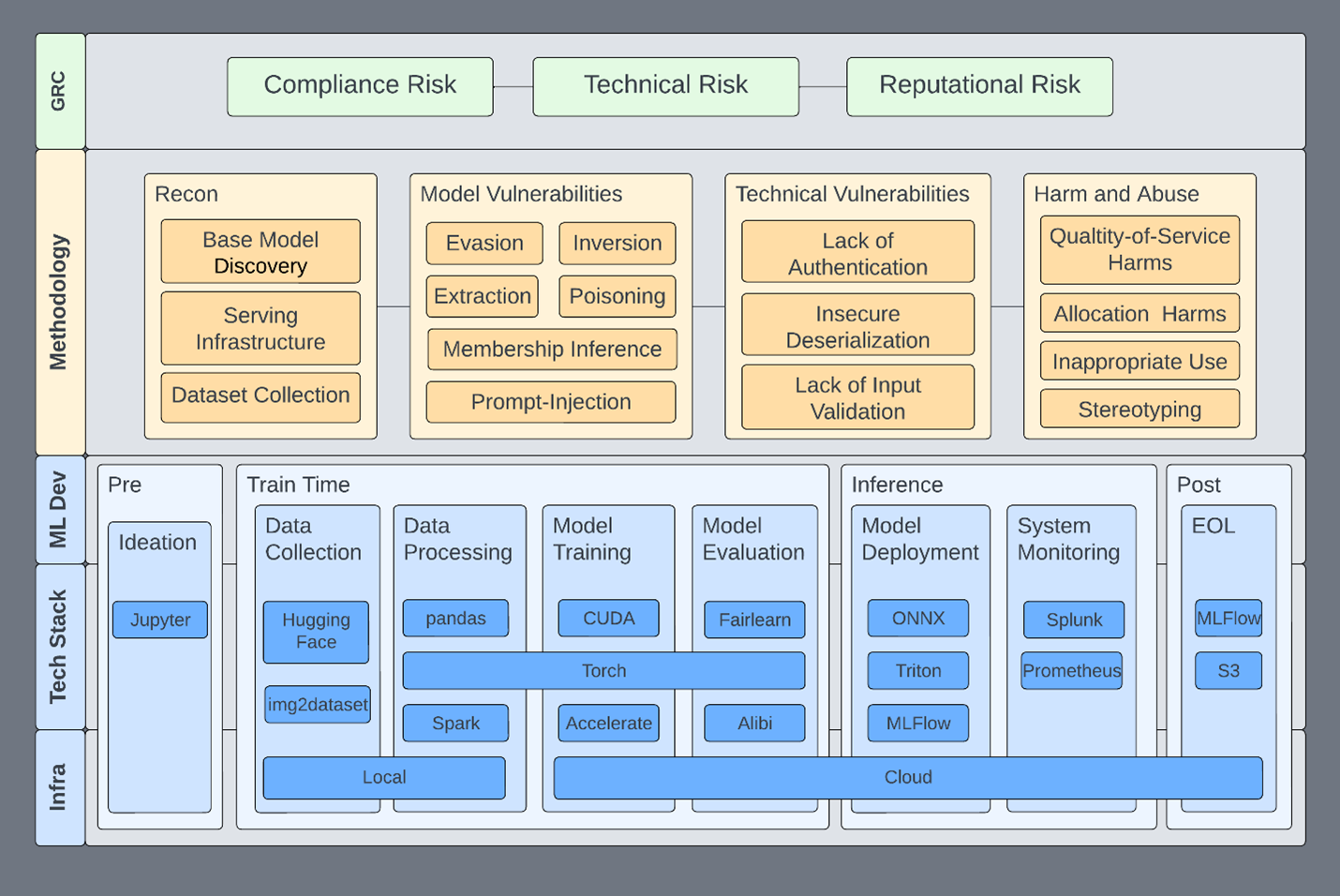

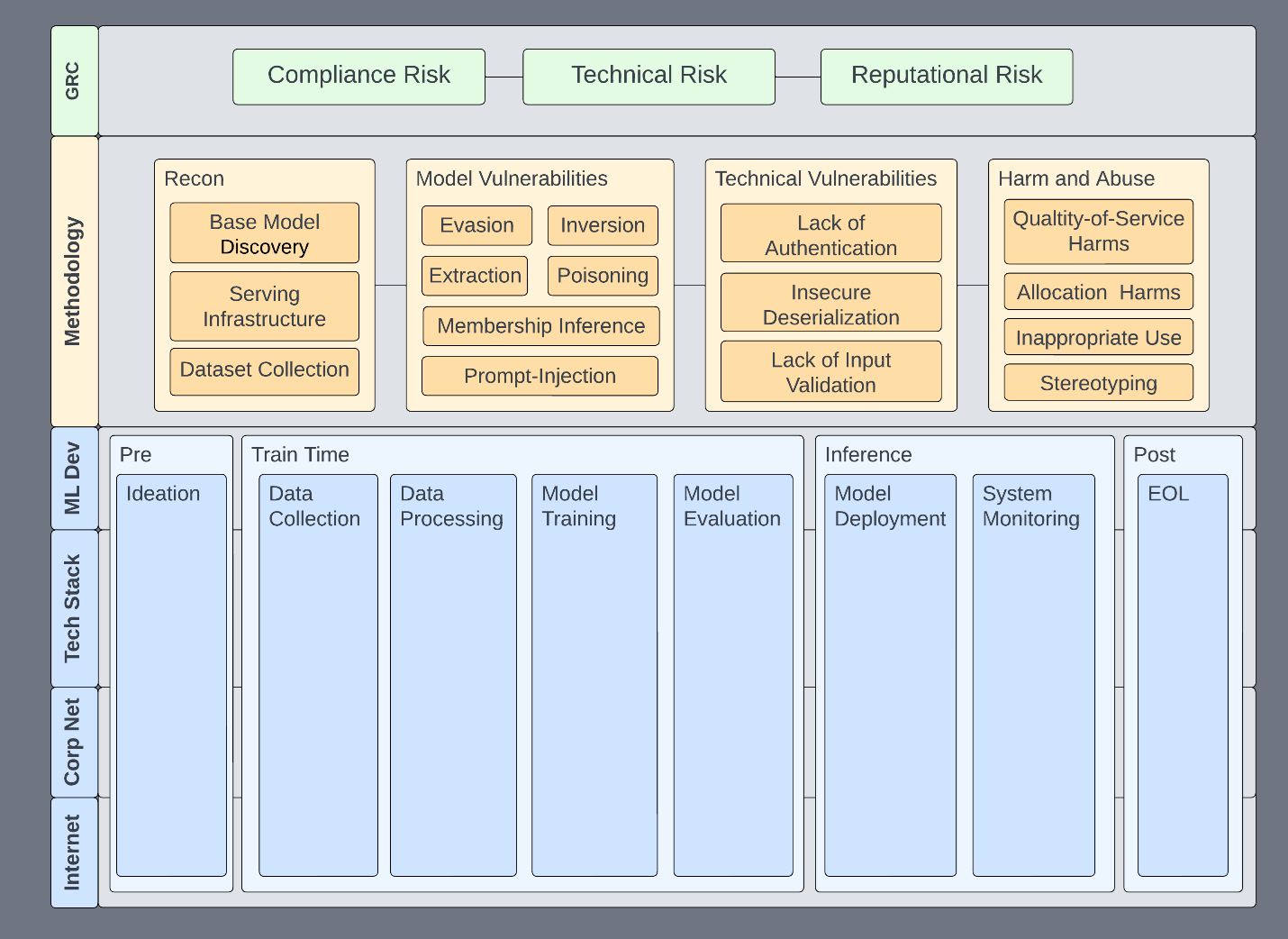

Information security offers many useful paradigms, tools, and network access that enable us to accelerate responsible use across all domains. This framework serves as our foundation and aligns assessment efforts with organizational standards. We use it to guide assessments (Figure 1) toward the following objectives:

- Risks that our organization cares about and wants to eliminate are addressed.

- Required assessment activities and the various tactics, techniques, and procedures (TTPs) are clearly defined. TTPs can be added without altering existing structures.

- Systems and technologies within our assessment scope are clearly defined. This helps us stay focused on ML systems without drifting into other areas.

- All work resides within a single framework that stakeholders can reference to immediately gain a high-level understanding of ML security.

This helps us set expectations for assessments, the systems we may impact, and the risks we address. The framework is not specific to red teaming, but some of its properties are foundational to a functional ML security program, of which red teaming is just one component.

Figure 1. AI Red Team Assessment Framework

The specific techniques and capabilities are not necessarily what matters most. What matters is that whether you are red teaming, vulnerability scanning, or conducting any type of assessment on ML systems, everything has a place to go.

The framework enables us to address specific issues in specific parts of the ML pipeline, infrastructure, or technology. It becomes a place to communicate the risk of issues to affected systems, both upstream and downstream, and to inform policy and technical decisions.

Any given subsection can be isolated, expanded, and described in the context of the overall system. Here are some examples:

- Evasion is expanded to include specific algorithms or TTPs relevant to assessing specific model types. The red team can pinpoint exactly which infrastructure components are affected.

- Technical vulnerabilities can affect any level of infrastructure or only specific applications. They can be addressed according to their function and corresponding risk rating.

- Harm and abuse scenarios, which may be unfamiliar to many information security practitioners, are not merely included but integrated. In this way, we incentivize technical teams to consider harm and abuse scenarios when assessing ML systems. Alternatively, they can provide tools and expertise to ethics teams.

- Requirements handed down, both legacy and new, can be integrated more quickly.

Such a framework offers many benefits. Consider how a disclosure process can benefit from this composed view. The core building blocks are Governance, Risk, and Compliance (GRC) and Machine Learning (ML) Development.

Governance, Risk, and Compliance

As with many organizations, GRC represents the highest level of information security efforts, ensuring that business security requirements are enumerated, communicated, and enforced. As an AI red team operating under the information security umbrella, the following are the high-level risks we focus on:

- Technical Risk: An ML system or process is compromised due to technical vulnerabilities or weaknesses.

- Reputational Risk: Model performance or behavior reflects poorly on the organization. In this new paradigm, this may include releasing a model with broad societal impact.

- Compliance Risk: An ML system is non-compliant, resulting in fines or reduced market competitiveness, much as with PCI or GDPR violations.

These high-level risk categories exist across all information systems, including ML systems. Think of these categories as separate colored lenses on a light. Using each colored lens reveals the risks of the underlying system from a different angle, and sometimes risks may overlap. For example, a technical vulnerability leading to a breach could cause reputational damage. Depending on where the breach occurs, compliance may also require breach notifications, fines, and more.

Even if ML had no vulnerabilities of its own, it is still developed, stored, and deployed on infrastructure governed by the standards established through GRC efforts. All assets within an organization must comply with GRC standards. If they do not, ideally it is only because management has raised and approved an exception.

ML Development

At the bottom of the stack is the ML development lifecycle, as it represents the activity that GRC seeks visibility into. We generally view an ML system as any system involving ML, including the processes and systems used to build models. Components of an ML system may include web servers hosting models for inference, data lakes storing training data, or systems that use model outputs to make decisions.

The development pipeline spans multiple systems, sometimes even uncoordinated ones. Each stage of the lifecycle is functionally unique and depends on the previous stage. Because of this, ML systems tend to be tightly integrated, and a compromise in any part of the pipeline can affect other upstream or downstream development stages.

More detailed MLOps pipelines exist, but the canonical example is sufficient to successfully group supporting tools and services with their lifecycle stages (Table 1).

| Phase | Description | Model State |
|---|---|---|
| Ideation | Discussions, meetings, and intent around requirements. | Pre-development |
| Data Collection | Models need data for training. Data is typically collected from public and private sources with a specific model in mind. This is an ongoing process, as data continues to be collected from these sources. | Training |
| Data Processing | Collected data is processed in any number of ways before being fed into algorithms for training and inference. | Training |
| Model Training | The processed data is then fed through algorithms to train the model. | Training |
| Model Evaluation | After training, the model is validated to ensure accuracy, robustness, interpretability, or any number of other metrics. | Training |
| Model Deployment | The trained model is embedded into a system for production use. ML is deployed in many ways: inside autonomous vehicles, on web APIs, or in client applications. | Inference |
| System Monitoring | After model deployment, the system is monitored. This includes aspects of the system that may not be directly related to the ML model. | Inference |
| End of Life | Data migration, changing business needs, and innovation all require properly decommissioning systems. | Post-development |

Table 1. MLOps Pipeline with Accompanying Red Team Tools and Services

This high-level structure enables risks to be placed in the context of the overall ML system and provides some natural security boundaries. For example, implementing privilege tiers between phases may prevent incidents from cascading across an entire pipeline or multiple pipelines. Whether compromised or not, the purpose of the pipeline is to deploy models for use.

Methodology and Use Cases

The methodology attempts to cover all major concerns related to ML systems. Within our framework, any given phase can be handed off to a skilled team:

- Existing offensive security teams may have the capability to perform reconnaissance and explore technical vulnerabilities.

- Responsible AI teams have the capability to address harm and abuse scenarios.

- ML researchers have the capability to handle model vulnerabilities.

Our AI Red Team prefers to consolidate these skills within the same or adjacent teams. The gains in learning and efficiency are undeniable: traditional red teamers end up contributing to academic papers, and data scientists are credited with CVEs.

| Assessment Phase | Description |
|---|---|
| Reconnaissance | This phase covers techniques described in MITRE ATT&CK or MITRE ATLAS. |
| Technical Vulnerabilities | All the traditional weaknesses you know and love. |
| Model Vulnerabilities | These vulnerabilities typically originate from the research space and cover the following: extraction, evasion, inversion, membership inference, and poisoning. |
| Harm and Abuse | Models are often trained and distributed in ways that could be misused for malicious or otherwise harmful tasks. Models may also be biased, whether intentionally or unintentionally. Alternatively, they may not accurately reflect the environments in which they are deployed. |

Table 2. Methodology Assessment Phases

Regardless of which team performs which assessment activity, it is conducted within the same framework and informs the broader assessment effort. Here are some specific use cases:

- Addressing new prompt injection techniques

- Examining and defining security boundaries

- Using privilege tiers

- Conducting tabletop exercises

Addressing New Prompt Injection Techniques

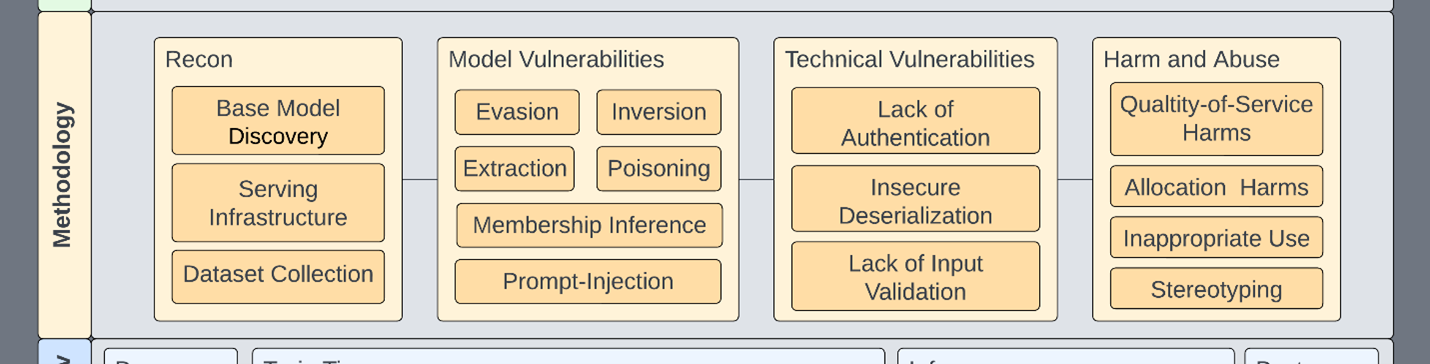

In this scenario, the output of a large language model (LLM) is carelessly placed into a Python exec or eval statement. You can already see how the combined view helps address problems across multiple dimensions, since input validation serves as one layer of defense against prompt injection.

Figure 2. A new prompt injection vulnerability may span both the Model and Technical columns
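To make the `exec`/`eval` scenario concrete, here is a minimal sketch that assumes a hypothetical application asking an LLM for an arithmetic expression and then computing the result. Instead of passing the model output to `eval`, it parses the text with Python's `ast` module and evaluates only an allow-list of node types. The function name `safe_arithmetic` and the operator allow-list are illustrative assumptions, not part of the framework described in this post.

```python
import ast
import operator

# Allow-list of arithmetic operators; anything else is rejected.
_BINARY_OPS = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
}
_UNARY_OPS = {ast.USub: operator.neg, ast.UAdd: operator.pos}


def safe_arithmetic(llm_output: str) -> float:
    """Evaluate a plain arithmetic expression without calling eval/exec."""

    def _eval(node: ast.AST) -> float:
        if isinstance(node, ast.Expression):
            return _eval(node.body)
        # Accept only int/float literals (bool, str, etc. are rejected).
        if isinstance(node, ast.Constant) and type(node.value) in (int, float):
            return node.value
        if isinstance(node, ast.BinOp) and type(node.op) in _BINARY_OPS:
            return _BINARY_OPS[type(node.op)](_eval(node.left), _eval(node.right))
        if isinstance(node, ast.UnaryOp) and type(node.op) in _UNARY_OPS:
            return _UNARY_OPS[type(node.op)](_eval(node.operand))
        # Names, calls, attribute access, and everything else end up here.
        raise ValueError(f"disallowed syntax: {type(node).__name__}")

    try:
        tree = ast.parse(llm_output.strip(), mode="eval")
    except SyntaxError as exc:
        raise ValueError("not a valid expression") from exc
    return _eval(tree)
```

With this sketch, `safe_arithmetic("2 * (3 + 4)")` returns `14`, while an injected payload such as `__import__('os').system('id')` raises `ValueError` because function calls are not on the allow-list. This is input validation on the technical side only; it does not stop the model from being manipulated in the first place.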

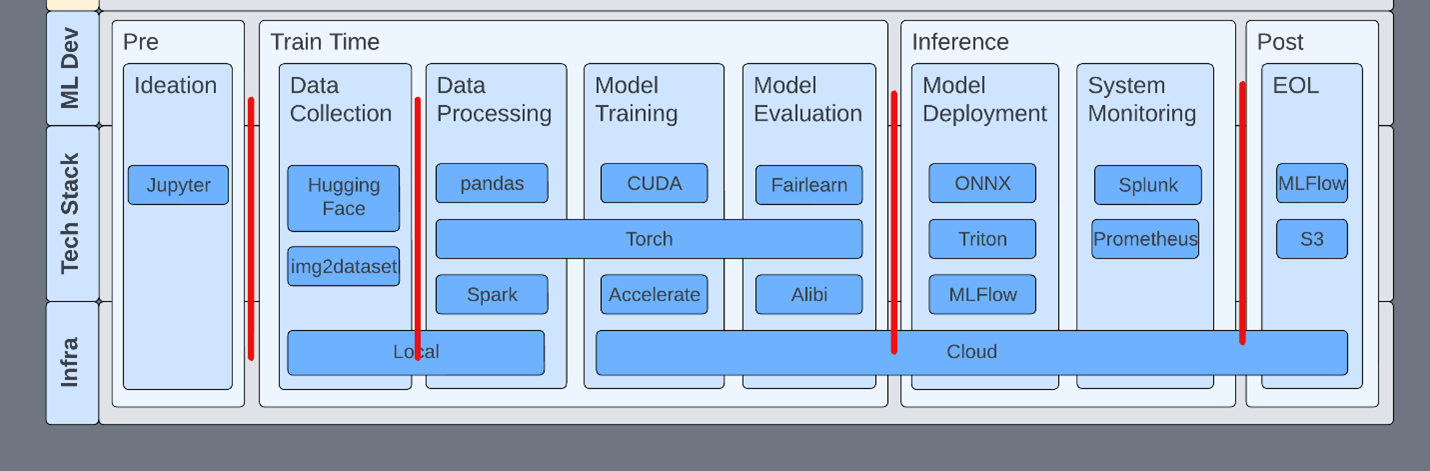

Examining and Defining Security Boundaries

Separating each phase with security controls reduces the attack surface and increases visibility into ML systems. An example control might be blocking pickle files (yes, that torch file contains pickle) outside of development environments, requiring production models to be converted to a format less prone to code execution, such as ONNX. This allows developers to continue using pickle during development but not in sensitive environments.

While avoiding pickle entirely would be ideal, security is often about compromise. Where completely avoiding a problem is not feasible, organizations should seek to add mitigating controls.
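A control like the one described above needs some way to recognize pickle content in a model artifact. The following is a rough sketch of such a check, assuming it runs as a gate before artifacts enter a sensitive environment. The function name `contains_pickle` is hypothetical, and the check is a heuristic: it recognizes zip-based checkpoints that hold a `.pkl` member (the layout `torch.save` uses by default) and raw streams that start with a pickle protocol header. Pickle protocols 0 and 1 have no such header and would not be detected.

```python
import zipfile

# Pickle protocol 2+ streams begin with the PROTO opcode (0x80)
# followed by a one-byte protocol version.
_PICKLE_PROTO_OPCODE = 0x80
_MAX_KNOWN_PROTOCOL = 5


def contains_pickle(path: str) -> bool:
    """Heuristically report whether a model artifact carries pickle data."""
    # Zip-based checkpoints store their object graph in an archive
    # member named like "<name>/data.pkl".
    if zipfile.is_zipfile(path):
        with zipfile.ZipFile(path) as archive:
            return any(name.endswith(".pkl") for name in archive.namelist())
    # Otherwise, look for a raw pickle header at the start of the file.
    with open(path, "rb") as handle:
        header = handle.read(2)
    return (
        len(header) == 2
        and header[0] == _PICKLE_PROTO_OPCODE
        and 2 <= header[1] <= _MAX_KNOWN_PROTOCOL
    )
```

A deployment gate could reject any artifact for which this returns `True` and require conversion to a format such as ONNX first. Treat it as a starting point rather than a complete scanner, since it does not inspect nested archives or data embedded at other offsets.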

Figure 3. Security Between Development Phases

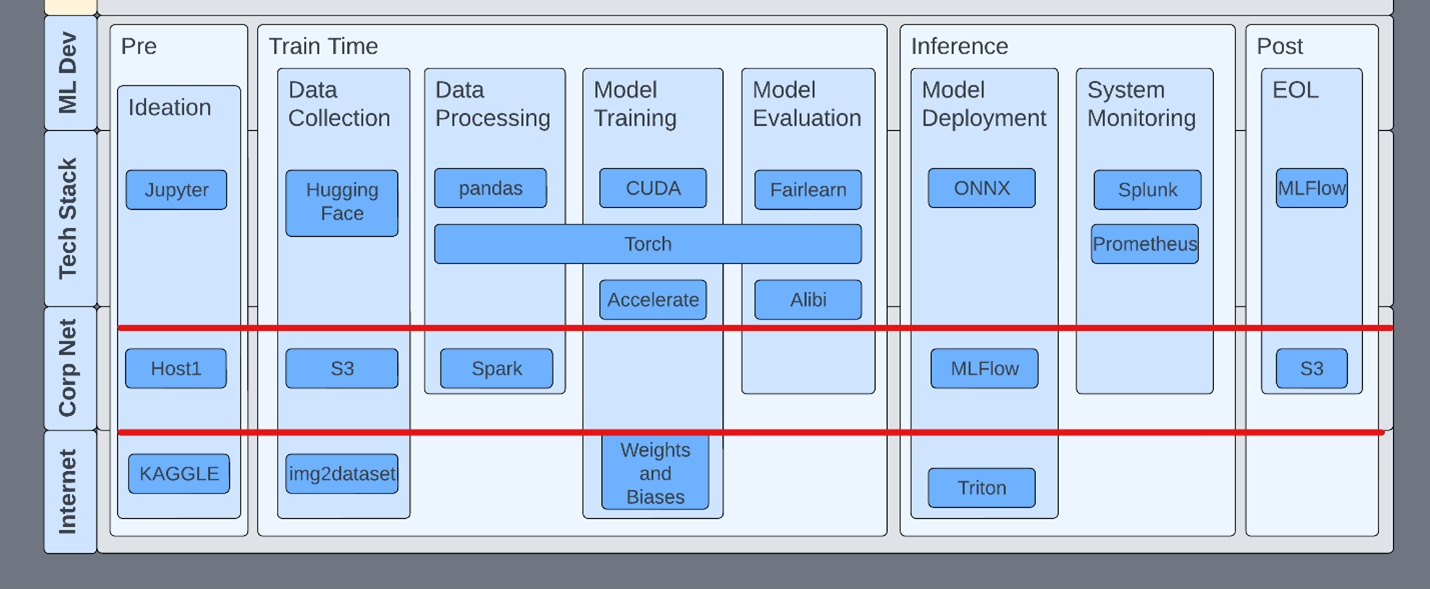

Using Privilege Tiers

Within the development pipeline, it is important to understand the tools used at each stage of the lifecycle and their properties. For example, MLFlow has no authentication by default. Launching an MLFlow server, knowingly or unknowingly, opens that host to exploitation via deserialization.

In another example, Jupyter servers are often started with parameters that disable authentication, and TensorBoard has no authentication. This does not mean that TensorBoard should have authentication, but teams should be aware of this fact and ensure that appropriate network security rules are in place.
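One way to check whether such services are reachable from a given network position is a simple TCP connection test, sketched below. The port numbers are the commonly documented defaults for each tool (5000 for MLflow, 8888 for Jupyter, 6006 for TensorBoard); real deployments often change them, so the mapping is an assumption to adjust. The function names are hypothetical.

```python
import socket

# Default listening ports for the tools named above. Deployments can
# override these, so treat the mapping as a starting point only.
DEFAULT_PORTS = {"MLflow": 5000, "Jupyter": 8888, "TensorBoard": 6006}


def port_is_open(host: str, port: int, timeout: float = 1.0) -> bool:
    """Return True if a TCP connection to host:port succeeds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unreachable hosts.
        return False


def exposed_services(host: str, ports: dict[str, int] = DEFAULT_PORTS) -> list[str]:
    """List the named services whose ports accept connections on host."""
    return [name for name, port in ports.items() if port_is_open(host, port)]
```

An open port only shows that something is listening; it does not prove which service it is or that authentication is missing. Run a check like this only against hosts you are authorized to assess, and use the results to decide where network security rules need review.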

Consider the scope of all technologies within the development pipeline. This includes simple things like two-factor authentication on ML services such as HuggingFace.

Figure 4. Privilege Tiers Between Infrastructure Layers

Conducting Tabletop Exercises

Consider stripping the ML development process down to just your technologies, where they reside, and the TTPs that will be applied. Move up and down the stack. Here are some scenarios to think through quickly:

- A deployed Flask server has debug mode enabled and is exposed to the Internet. It hosts a model that provides inference on HIPAA-protected data.

- PII was downloaded as part of a dataset and has been used to train several models. Now a customer is asking about it.

- A storage bucket containing several ML artifacts, including production models, has been left open to the public. It was accessed improperly and files were modified.

- Despite the model being accurate and up to date, some individuals can consistently bypass content filters.

- A model underperforms expectations in certain geographic regions.

- Someone is scanning the internal network from an inference server used to host an LLM.

- A system monitoring service detects that someone is submitting well-known datasets against the inference service.

These scenarios may be somewhat contrived, but take the time to place the various technologies in the right buckets and then work through the methodology step by step.

- Does this sound like a problem caused by a technical vulnerability?

- Does it affect any ML processes?

- Who is responsible for these models? Would they know if changes had been made?

These are questions that must be answered. Some of these scenarios may seem to fall immediately into one part of the methodology. However, upon closer inspection, you will find that most span multiple domains.
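For teams that want to record the outcome of a tabletop exercise in a structured way, the sketch below models a scenario tagged with lifecycle phases (Table 1), assessment phases (Table 2), and the three GRC risk categories. The class and field names are hypothetical, and the example tags reflect one possible reading of two scenarios from the list above, not an official classification.

```python
from dataclasses import dataclass, field
from enum import Enum


class AssessmentPhase(Enum):
    RECONNAISSANCE = "Reconnaissance"
    TECHNICAL = "Technical Vulnerabilities"
    MODEL = "Model Vulnerabilities"
    HARM_AND_ABUSE = "Harm and Abuse"


class Risk(Enum):
    TECHNICAL = "Technical Risk"
    REPUTATIONAL = "Reputational Risk"
    COMPLIANCE = "Compliance Risk"


@dataclass
class Scenario:
    summary: str
    lifecycle_phases: set[str] = field(default_factory=set)
    assessment_phases: set[AssessmentPhase] = field(default_factory=set)
    risks: set[Risk] = field(default_factory=set)

    def spans_multiple_domains(self) -> bool:
        """True when the scenario touches more than one phase or risk."""
        return len(self.assessment_phases) > 1 or len(self.risks) > 1


# Example tagging; the assignments below are a judgment call.
flask_debug = Scenario(
    summary="Flask server with debug mode exposed to the Internet",
    lifecycle_phases={"Model Deployment"},
    assessment_phases={AssessmentPhase.RECONNAISSANCE, AssessmentPhase.TECHNICAL},
    risks={Risk.TECHNICAL, Risk.COMPLIANCE},
)
regional_underperformance = Scenario(
    summary="Model underperforms in certain geographic regions",
    lifecycle_phases={"Data Collection", "Model Evaluation"},
    assessment_phases={AssessmentPhase.HARM_AND_ABUSE},
    risks={Risk.REPUTATIONAL},
)
```

Calling `spans_multiple_domains()` on each record gives a quick way to flag scenarios that need more than one team at the table. Different teams may reasonably tag the same scenario differently, which is itself a useful discussion during the exercise.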

Figure 5. Threat Model Worksheet

Conclusion

This framework has provided you with several familiar paradigms that your organization can begin to strategize around. Through a principled approach, you can lay the groundwork for continuous security improvement, from product design to production deployment, reaching toward standards and maturity. We invite you to adopt our approach and adapt it to your own purposes.

Our approach does not prescribe behaviors or processes. Rather, it is designed to organize them. Your organization may already have mature processes for discovering, managing, and mitigating risks associated with traditional applications. We hope this framework and methodology will similarly prepare you to identify and mitigate the new risks introduced by ML components deployed within your organization.

If you have any questions, please leave a comment below or reach out to threatops@nvidia.com.