Propositional Logic

Propositional Logic, also known as Sentential Logic, is one of the most fundamental branches of logic and mathematics. It studies propositions and how simple propositions can be combined into complex ones using logical connectives, and analyzes their truth values.

Fundamental Concepts

Propositions, Syntax, and Semantics

Proposition

A proposition is a declarative statement that can be judged as either true or false. In propositional logic, we typically use uppercase letters (such as \(P, Q, R\)) to represent a proposition.

- Examples of propositions:

- "Beijing is the capital of China." (True proposition)

- "1 + 1 = 3." (False proposition)

- "It is raining today." (May be true or false, but necessarily one or the other)

- Non-examples:

- "How are you?" (A question — has no truth value)

- "Run!" (A command — has no truth value)

- "x + 1 = 2" (Unless the value of \(x\) is specified, its truth cannot be determined — this belongs to the domain of predicate logic)

Syntax

Syntax specifies which sentence structures are legal (i.e., "well-formed"). It is concerned only with the rules for arranging symbols and does not concern itself with meaning.

For example, in algebra:

- \(x + y = 4\) is legal (conforms to syntactic rules).

- \(x4y+=\) is illegal (violates syntactic rules — essentially gibberish).

In propositional logic, the syntax uses symbols such as \(P_1, P_2, \dots\) to represent atomic propositions (e.g., "Today is Monday"). If \(S\) is a sentence, then \(\neg S\) is also a sentence (\(\neg\) is read as "not").

Semantics

Semantics defines the truth value of a sentence — that is, it assigns meaning to symbols and determines whether a sentence is "true" or "false." The truth of a sentence depends on the "world" or "context" we are in (i.e., the specific values assigned to variables).

Example: Sentence \(s: x + y = 4\)

- In the world where \(x=3, y=1\), it is true.

- In the world where \(x=1, y=1\), it is false.

In propositional logic, \(\neg S\) is true if and only if (iff) \(S\) is false:

- When \(P\) is T, \(\neg P\) is F.

- When \(P\) is F, \(\neg P\) is T.

Logical Connectives

Propositional logic does not concern itself with the internal details of propositions (such as "who" did "what"); it only cares about how propositions are connected. The most commonly used logical connectives include:

-

Negation (NOT)

- Symbol: \(\neg\) or \(\sim\)

- Meaning: If \(P\) is true, \(\neg P\) is false.

- Example: \(P\) = "It is raining," \(\neg P\) = "It is not raining."

- Conjunction (AND)

- Symbol: \(\wedge\)

- Meaning: \(P \wedge Q\) is true only when both \(P\) and \(Q\) are true.

- Example: "I am eating and I am watching TV."

- Disjunction (OR)

- Symbol: \(\vee\)

- Meaning: \(P \vee Q\) is true as long as at least one of \(P\) or \(Q\) is true (or both).

- Example: "Today is Saturday or today is Sunday."

- Implication (IF...THEN)

- Symbol: \(\rightarrow\) or \(\Rightarrow\)

- Meaning: \(S_1 \Rightarrow S_2\) is true if and only if \(S_1\) is false or \(S_2\) is true. An implication is false in exactly one case: "the premise is true, but the conclusion is false" (T \(\Rightarrow\) F).

- The left side of the arrow is called the antecedent (the condition); the right side is called the consequent (the conclusion). As long as the antecedent is False, the entire implication cannot be falsified and is judged True; only when the antecedent is True and the consequent is False is the implication False.

- Note: When converting to CNF, \(A \rightarrow B\) can be equivalently rewritten as \(\neg A \vee B\).

- Biconditional (IF AND ONLY IF)

- Symbol: \(\leftrightarrow\) or \(\Leftrightarrow\)

- Meaning: \(P \leftrightarrow Q\) is true if and only if \(P\) and \(Q\) have the same truth value. This is equivalent to \((P \Rightarrow Q) \wedge (Q \Rightarrow P)\) both holding.

Quick Reference for Common Symbols

| Symbol | Name | Reading |

|---|---|---|

| \(\neg\) | Negation | Not |

| \(\wedge\) | Conjunction | And |

| \(\vee\) | Disjunction | Or |

| \(\rightarrow\) | Implication | Implies |

| \(\leftrightarrow\) | Biconditional | If and only if |

Truth Tables and Formula Classification

A truth table lists the truth or falsity of a proposition under all possible scenarios.

For example, the truth table for \(P \wedge Q\) (P and Q):

| P | Q | P ∧ Q |

|---|---|---|

| T | T | T |

| T | F | F |

| F | T | F |

| F | F | F |

Based on the results of a truth table, formulas are classified into three categories:

- Valid (Tautology): True under all models (all entries are T). Example: \(A \vee \neg A\) (the law of excluded middle — regardless of whether A is true or false, the result is always true).

- Satisfiable: True under some models and false under others (a mix of T and F). Example: \(A \wedge B\) (holds when both A and B are true).

- Unsatisfiable (Contradiction): False under all models (all entries are F). Example: \(A \wedge \neg A\) (A cannot be both true and false simultaneously — no model can make it hold).

Logical Equivalence and Simplification Rules

Logical Equivalence

If two logical formulas yield identical results under all possible truth assignments, they are logically equivalent, denoted by \(\equiv\) or \(\iff\). We can use equivalence relations to manipulate and simplify complex logical formulas.

Core Simplification Rules (Essential Tools for Converting to CNF)

In practice, the following rules are used most frequently:

1. Implication Elimination

Significance: This is the first step in converting a logical formula to CNF — it removes all arrows, leaving only \(\neg\), \(\vee\), and \(\wedge\).

2. De Morgan's Laws

When negating a compound proposition, negate each component and swap the conjunction and disjunction operators. This is the core tool for handling negation transformations between "or" and "and."

3. Distributive Laws

Similar to the distributive law of multiplication in arithmetic, this is a key step in restructuring formulas and converting them to CNF.

Complete List of Logical Identities

The following are all commonly used logical equivalences in propositional logic:

- Commutativity: \(p \vee q \equiv q \vee p\), \(p \wedge q \equiv q \wedge p\) The order of operands does not affect the result.

- Associativity: \((p \vee q) \vee r \equiv p \vee (q \vee r)\), \((p \wedge q) \wedge r \equiv p \wedge (q \wedge r)\) When chaining multiple identical operators, the grouping does not affect the result.

- Distributivity: \(p \vee (q \wedge r) \equiv (p \vee q) \wedge (p \vee r)\), \(p \wedge (q \vee r) \equiv (p \wedge q) \vee (p \wedge r)\)

- Idempotence: \(p \vee p \equiv p\), \(p \wedge p \equiv p\) A proposition disjoined or conjoined with itself remains unchanged.

- De Morgan's Laws: \(\neg(p \vee q) \equiv \neg p \wedge \neg q\), \(\neg(p \wedge q) \equiv \neg p \vee \neg q\)

- Excluded Middle: \(p \vee \neg p \equiv T\), \(p \wedge \neg p \equiv F\) A proposition and its negation: at least one must be true (tautology), and they cannot both be true (contradiction).

- Identity: \(p \vee F \equiv p\), \(p \wedge T \equiv p\) A proposition disjoined with F, or conjoined with T, remains unchanged.

- Domination: \(p \vee T \equiv T\), \(p \wedge F \equiv F\)

- Contrapositive: \(p \Rightarrow q \equiv \neg q \Rightarrow \neg p\) A proposition and its contrapositive are logically equivalent.

- Implication as Disjunction: \(p \Rightarrow q \equiv \neg p \vee q\)

- Negation of Implication: \(\neg(p \Rightarrow q) \equiv p \wedge \neg q\) The negation of an implication is equivalent to the antecedent being true and the consequent being false.

- Double Negation: \(\neg(\neg p) \equiv p\)

Knowledge-Based Agents

A Knowledge-Based Agent (KB agent) is an AI system that makes decisions through logical reasoning. Its "brain" is a Knowledge Base (KB), composed of many sentences that represent assertions or known facts about the world.

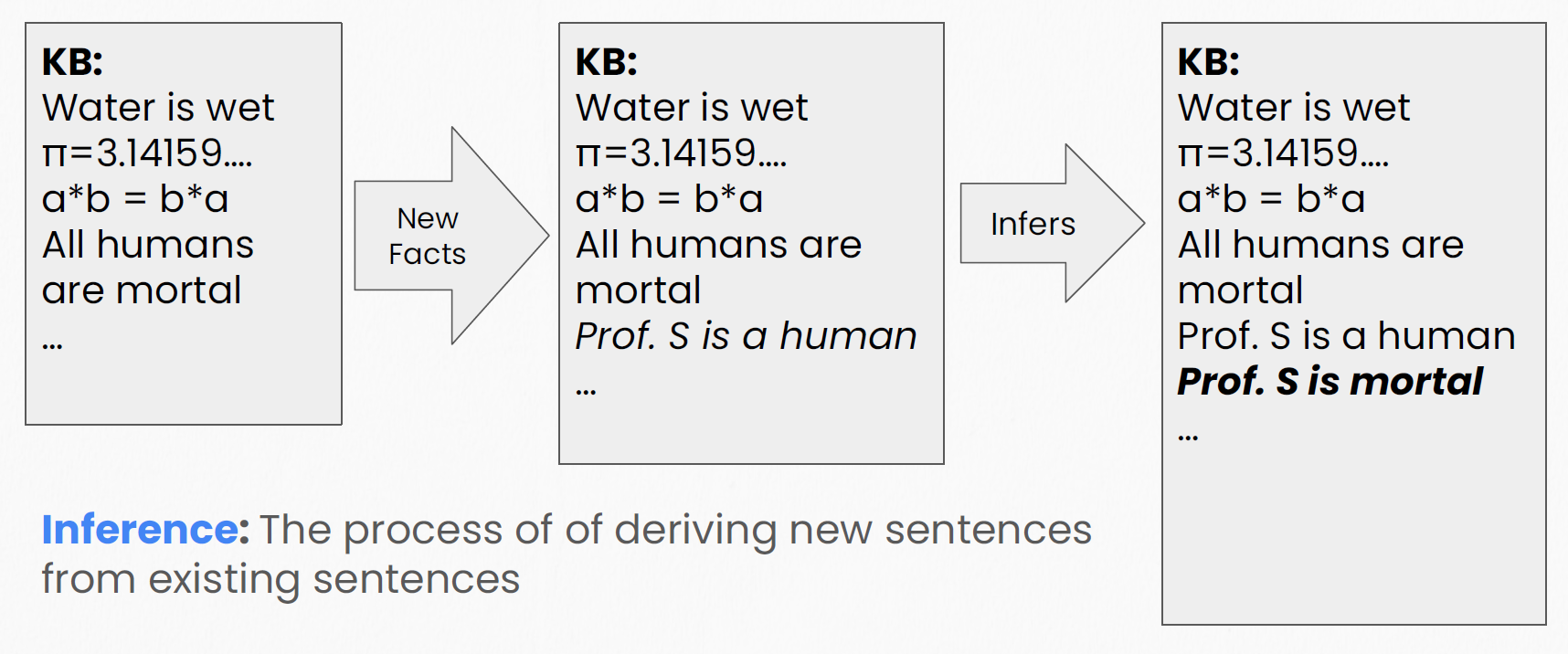

Inference: From the Known to the Unknown

Inference is the process of deriving new sentences from existing ones.

Classic Syllogism Example:

- Known rule (KB): "All humans are mortal."

- New fact: "Prof. S is a human."

- Inference process: The logic engine combines the two pieces of information.

- Conclusion: "Prof. S is mortal" — a conclusion not explicitly stated in the KB.

Models and Entailment

Model

A model is a specific "possible world" or "environment." In this environment, all variables have definite values, so the truth of every sentence can be determined. If sentence \(s\) is true in model \(m\), we say \(m\) satisfies \(s\).

Example: Sentence \(s: x + y = 4\) (over the natural numbers)

- Model \(\{x=3, y=1\}\): \(3+1=4\), satisfies \(s\).

- Model \(\{x=1, y=1\}\): \(1+1 \neq 4\), does not satisfy \(s\).

The notation \(M(s)\) denotes the set of all models that satisfy sentence \(s\). For \(x+y=4\), \(M(s)\) includes \(\{x=0, y=4\}\), \(\{x=1, y=3\}\), \(\{x=2, y=2\}\), and so on.

Entailment (\(\vDash\))

- \(KB \vDash \alpha\) is read as "KB entails \(\alpha\)," meaning "\(\alpha\) is a logical consequence of KB."

- Intuition: If you know "all humans are mortal" and "Prof. S is a human," then it logically follows that "Prof. S is mortal."

Two equivalent definitions of entailment:

-

Truth-based definition:

$$ KB \vDash \alpha \iff \alpha \text{ is true in every situation in which } KB \text{ is true} $$

In every "world" where the knowledge base \(KB\) is true, \(\alpha\) is also necessarily true. There is no counterexample where "\(KB\) is true but \(\alpha\) is false." 2. Model-based definition (set-theoretic perspective):

$$ KB \vDash \alpha \iff M(KB) \subseteq M(\alpha) $$

- \(M(KB)\) is the set of all models in which the knowledge base is true.

- \(M(\alpha)\) is the set of all models in which \(\alpha\) is true.

- If \(M(KB)\) is contained within \(M(\alpha)\), entailment holds.

- Informally: whenever you are in a world where KB holds, you are also in a world where \(\alpha\) holds.

Quality Criteria for Inference Algorithms

\(KB \vdash_P \alpha\) means: using inference procedure \(P\), sentence \(\alpha\) can be derived from knowledge base \(KB\).

- \(KB\) (Knowledge Base): All information, rules, and facts at hand.

- \(\alpha\) (Alpha): The target conclusion to be verified.

- \(P\) (Procedure): The specific inference algorithm. Different algorithms \(P\) may have different efficiencies for the same KB.

- \(\vdash\) (Turnstile): Read as "derives."

Two criteria for evaluating the quality of an inference procedure \(P\):

-

Soundness

- Definition: Any sentence \(\alpha\) derived by \(P\) is logically entailed by \(KB\) (derived conclusions are guaranteed to be true).

- Formula: \(KB \vdash_P \alpha \implies KB \models \alpha\)

- Informally: no false positives — everything derived is correct.

- Completeness

- Definition: \(P\) can derive all sentences entailed by \(KB\) (no true conclusion is missed).

- Formula: \(KB \models \alpha \implies KB \vdash_P \alpha\)

- Informally: no false negatives — everything that is true can be derived.

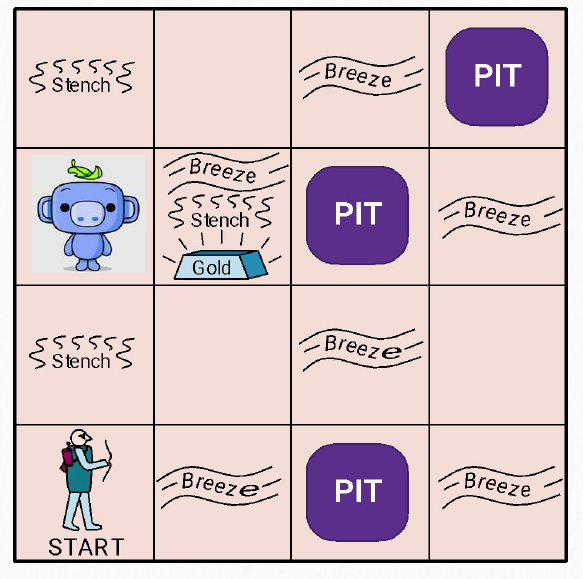

Classic Example: Wumpus World

The Wumpus World is a classic treasure-hunting game in AI, ideally suited for demonstrating the power of logical reasoning.

Game setup: An explorer (AI robot) is placed inside a \(4 \times 4\) dark cave.

- Objective: Find the gold and get out alive.

-

Dangers:

- Wumpus (monster): Encountering it means being eaten.

-

Pit (symbol \(P\)): Falling in means death. * Sensors (clues): The robot cannot see its surroundings and relies solely on "sensations":

-

Breeze (symbol \(B\)): If you stand in a cell adjacent to a pit, you feel a breeze.

- Stench: If you are next to the Wumpus, you smell a stench.

The robot must use the perceived clues to logically reason about which cells are safe and which are dangerous — this is precisely where propositional logic reasoning shines.

Naive Inference Algorithm: Model Checking

Model checking is the most straightforward inference method: enumerate all possible "worlds," identify which worlds make the KB true, and then check whether the conclusion also holds in those worlds.

How it works:

- Enumerate all possible truth assignments for the propositional symbols to build a truth table.

- Identify the rows where all sentences in the KB are true (the "valid models").

- Check whether the conclusion \(\alpha\) is also true in all those rows.

Derivation example (Wumpus World):

- Confirming no pit: If \(P_{1,2}\) is False in all valid models, we can conclude there is no pit at that location (\(\neg P_{1,2}\)).

- Narrowing down pit locations: If in all valid models either \(P_{2,2}\) or \(P_{3,1}\) is True, we conclude \(P_{2,2} \lor P_{3,1}\) (at least one of the two locations has a pit).

Performance analysis:

| In theory | Sound and Complete |

| In practice | Computationally extremely inefficient |

| Time complexity | \(O(2^n)\) (\(n\) = number of propositional symbols, exponential blowup) |

Computational cost examples:

- 10 symbols \(\approx 0.000001\) seconds

- 50 symbols \(\approx 13\) days

- 100 symbols \(\approx 40.2\) trillion years (\(40.2 \times 10^{12}\) years)

Conclusion: Naive model checking cannot handle complex problems. We need smarter inference algorithms — which leads us to Resolution.

Resolution

The resolution principle is an important method for automated theorem proving in propositional logic. Compared to model checking (exhaustive truth table enumeration), resolution is more efficient and serves as a general-purpose inference rule.

Resolution is based on proof by contradiction:

- To prove \(KB \models \alpha\), we do not need to directly show that \(\alpha\) is true.

- Instead, assume \(\alpha\) is false (add \(\neg \alpha\)) and check whether \(KB \wedge \neg \alpha\) leads to a contradiction (i.e., is unsatisfiable).

- If a contradiction is reached (the empty clause \(\emptyset\) is derived), the assumption fails, and \(\alpha\) must be true.

CNF (Conjunctive Normal Form)

The resolution algorithm requires all formulas to first be converted into Conjunctive Normal Form (CNF).

Structure of CNF:

- Literal: \(P\) or \(\neg P\) (a variable or its negation).

- Clause: A disjunction (OR) of literals, e.g., \((A \vee B \vee \neg C)\).

- CNF: A conjunction (AND) of clauses, e.g., \((A \vee B) \wedge (C \vee \neg D)\).

In short: CNF = AND of ORs (clauses connected by \(\wedge\), where each clause consists of literals connected by \(\vee\)).

For example: \((\neg P_1 \vee P_2) \wedge (P_3 \vee P_4 \vee \neg P_5) \wedge P_6\)

Why is CNF needed?

When a formula is in CNF, logical contradictions can be detected as quickly as possible. For example, given clauses \((A \lor B)\) and \((\neg A \lor C)\):

- \(A\) is either true or false.

- If \(A\) is true: the second clause requires \(C\) to be true.

- If \(A\) is false: the first clause requires \(B\) to be true.

- Therefore, we directly obtain \((B \lor C)\) without exhaustively enumerating truth table rows.

Any propositional logic formula can be converted to CNF.

How to Convert Any Formula to CNF (Three Steps)

Using \((p \to q) \lor (r \wedge s)\) as an example:

Step 1: Eliminate implications and biconditionals

CNF can only contain \(\neg\), \(\lor\), and \(\wedge\), so first use the implication equivalence to remove arrows:

- Rule: \(A \to B \equiv \neg A \lor B\)

- Operation: Replace \((p \to q)\) with \((\neg p \lor q)\).

- Result: \((\neg p \lor q) \lor (r \wedge s)\)

Step 2: Move negations inward

If there is a \(\neg\) outside parentheses (e.g., \(\neg(p \lor q)\)), use De Morgan's laws to push it inside.

- In this problem, \(\neg\) only appears before \(p\), so no movement is needed.

- Result: still \((\neg p \lor q) \lor (r \wedge s)\)

Step 3: Apply the distributive law (the key step)

The goal is to bring \(\wedge\) to the outer level and push \(\vee\) inside the parentheses.

- Rule: \(A \lor (B \wedge C) \equiv (A \lor B) \wedge (A \lor C)\)

- Treat \((\neg p \lor q)\) as \(A\), \(r\) as \(B\), and \(s\) as \(C\):

This is now in CNF form.

Resolution Inference Rule

This is the "engine" of the entire resolution algorithm.

Complementary Literals

Complementary literals are a pair of literals that are negations of each other, e.g., \(P\) and \(\neg P\).

The Resolution Rule

If two clauses each contain complementary literals, the complementary pair can be eliminated, and the remaining literals from both clauses are merged into a new clause:

Intuitive explanation: Since \(P\) is either true or false:

- If \(P\) is true: \(\neg P\) is false, so for the second clause to hold, \(B\) must be true.

- If \(P\) is false: for the first clause to hold, \(A\) must be true.

- Therefore, at least one of \(A\) and \(B\) must be true, i.e., \((A \vee B)\).

Understanding Resolution via Truth Tables: From Two Variables to Many

Example 1: Two-variable case

Given two clauses: \((P_{1,3} \vee P_{2,2})\) and \((\neg P_{2,2})\).

\(P_{2,2}\) and \(\neg P_{2,2}\) are complementary literals. List all possible worlds:

| \(P_{1,3}\) | \(P_{2,2}\) | \(P_{1,3} \vee P_{2,2}\) | \(\neg P_{2,2}\) | Both premises true? |

|---|---|---|---|---|

| F | F | F | T | No (Clause 1 is false) |

| F | T | T | F | No (Clause 2 is false) |

| T | F | T | T | Yes |

| T | T | T | F | No (Clause 2 is false) |

In all worlds where both premises are true, only the third row satisfies the condition, and there \(P_{1,3} = T\).

Therefore, resolution yields: \(P_{1,3}\).

Example 2: Three-variable case

Given two clauses: \((P_{1,1} \vee P_{3,3} \vee P_{2,4})\) and \((\neg P_{2,4})\).

\(P_{2,4}\) and \(\neg P_{2,4}\) are complementary literals. Clause 2 (\(\neg P_{2,4}\)) requires \(P_{2,4} = F\). Under the condition \(P_{2,4}=F\), list all possible worlds:

| \(P_{1,1}\) | \(P_{3,3}\) | \(P_{2,4}\) | Clause 1 | Clause 2 | Both premises true? | \(P_{1,1} \vee P_{3,3}\) |

|---|---|---|---|---|---|---|

| F | F | F | F | T | No (Clause 1 is false) | F |

| F | T | F | T | T | Yes | T |

| T | F | F | T | T | Yes | T |

| T | T | F | T | T | Yes | T |

In all worlds where both premises are true (the "Yes" rows), the individual values of \(P_{1,1}\) and \(P_{3,3}\) cannot be determined (one may be true, or both may be), but we can confirm that \((P_{1,1} \vee P_{3,3})\) is necessarily true in all such worlds.

Therefore, resolution yields: \((P_{1,1} \vee P_{3,3})\).

General Form

If clause \(l_1 \vee \cdots \vee l_i \vee \cdots \vee l_k\) and clause \(m_1 \vee \cdots \vee m_j \vee \cdots \vee m_n\) contain complementary literals \(l_i\) and \(m_j\), the resolvent is:

In brief: remove the complementary literals from the two clauses and merge all remaining literals into a single new clause.

Complete Resolution Example

Task:

Given knowledge base KB:

- \((R \vee Q) \rightarrow \neg P\)

- \(S \vee P\)

- \(Q\)

Prove: \(S\)

Detailed Steps:

Step 1: Convert KB to CNF

- \((R \vee Q) \rightarrow \neg P\): using implication elimination becomes \(\neg(R \vee Q) \vee \neg P\), then applying De Morgan's law yields \((\neg R \wedge \neg Q) \vee \neg P\), and finally applying the distributive law gives \((\neg R \vee \neg P) \wedge (\neg Q \vee \neg P)\).

- Clause set: \(\{\neg R \vee \neg P,\; \neg Q \vee \neg P,\; S \vee P,\; Q\}\)

Step 2: Add the negation of the goal

- To prove \(S\), we add the assumption \(\neg S\).

- Current clause set:

- \(\neg R \vee \neg P\)

- \(\neg Q \vee \neg P\)

- \(S \vee P\)

- \(Q\)

- \(\neg S\) (from the negation of the goal)

Step 3: Perform resolution

- Clauses 3 (\(S \vee P\)) and 5 (\(\neg S\)) resolve: eliminate \(S\), leaving \(P\).

- Clauses 2 (\(\neg Q \vee \neg P\)) and 4 (\(Q\)) resolve: eliminate \(Q\), leaving \(\neg P\).

- The new conclusions \(P\) and \(\neg P\) resolve: producing the empty clause \(\emptyset\).

Step 4: Conclusion

- The empty clause (a logical contradiction) has been derived, meaning the assumption \(\neg S\) is untenable.

- Therefore, \(S\) is true, and we have proven \(KB \models S\).

Heuristic Strategies

Prioritize resolving clauses that originate from the negation of the goal. This is known as the "Set of Support" strategy, which gives the reasoning process directionality and avoids aimlessly generating large numbers of useless clauses.

Modus Ponens and Chaining

Although resolution is general-purpose, when the knowledge base contains only definite clauses or Horn clauses, we can repeatedly apply Modus Ponens for inference. This approach runs in linear time \(O(n)\), is sound and complete, and is faster than resolution.

Definite Clauses and Horn Clauses

To enable fast inference, the rules in the knowledge base must follow a specific format:

-

Definite Clauses:

\[ P_1 \land P_2 \land \dots \land P_n \Rightarrow C \]If all premises \(P\) are true, then the conclusion \(C\) is also true.

Example: If it is raining \(\land\) no umbrella \(\Rightarrow\) will get wet. * Horn Clauses: A relaxed version of definite clauses, requiring that a clause contains at most one positive literal. All definite clauses are Horn clauses.

Why restrict the form?

If the knowledge base contains only these simple rules, we can repeatedly apply Modus Ponens for inference. This inference is sound and complete and runs in linear time — far faster than the exponential complexity of resolution.

Forward Chaining

Forward chaining is a data-driven inference method. It starts from known facts and progressively triggers rules until the goal (query) is derived or no new knowledge can be produced.

Algorithm steps:

-

Setup

- Count: For each rule, count how many premises remain unsatisfied. For example, the rule \(A \land B \Rightarrow L\) has an initial Count = 2.

- Queue: Place all known true facts into the queue. Initial known facts: \(A\) and \(B\). 2. Fire & Propagate

- Dequeue a fact (e.g., \(A\)).

- Find all rules whose premises include \(A\) and decrement their Count by 1 (meaning: one more condition of this rule has been satisfied). 3. Derive new facts

- If a rule's Count reaches 0, all its premises have been satisfied.

- By Modus Ponens, the rule's conclusion (head) must be true — add it to the queue. 4. Repeat until the goal is found or the queue is empty:

- Known \(A, B\) \(\to\) satisfies \(A \land B \Rightarrow L\), derive \(L\).

- With \(L\), satisfies \(B \land L \Rightarrow M\), derive \(M\).

- With \(M\), further derive \(P\).

- With \(P\), satisfies \(P \Rightarrow Q\), finally derive the goal \(Q\). 5. Termination: Return SUCCESS if the goal is found; if the queue is empty without finding the goal, the conclusion cannot be derived.

Properties:

- Sound and Complete.

- Advantage: Can uncover all implicit knowledge.

- Disadvantage: May derive many conclusions irrelevant to the current goal — if there is a specific target, backward chaining is more efficient.

Backward Chaining

Backward chaining is a goal-directed inference method. It does not concern itself with all known facts — it focuses only on facts that can help prove the goal, working backward from the conclusion to the known conditions.

Algorithm steps (recursive "graph search"):

- Set the goal: Suppose we want to prove that \(Q\) is true.

- Find rules: Search the KB for rules with \(Q\) as the conclusion (head), e.g., \(P \Rightarrow Q\).

- Generate sub-goals: For \(Q\) to hold, we must prove the rule's premise \(P\) — the task becomes: prove \(P\).

- Recurse: Continue finding rules for \(P\) until reaching known facts (ground truth).

Comparison of the Two Algorithms

| Property | Forward Chaining | Backward Chaining |

|---|---|---|

| Driving method | Data-driven | Goal-directed |

| Starting point | Starts from known facts | Starts from the conclusion (query) to be proven |

| Efficiency | May derive many irrelevant conclusions | Examines only relevant facts, avoiding wasted computation |

| Best suited for | Uncovering all implicit information | Verifying whether a specific hypothesis holds |

Summary: By restricting logical rules to a specific form (using only definite clauses), we can transform otherwise complex logical reasoning into extremely fast linear-time algorithms.