Backdoor Attack

Backdoor Attack, also known as Trojan Attack, is a type of adversarial attack that occurs during the training phase. The attacker implants a covert "backdoor" into the training data or training process, causing the resulting model to behave normally on clean inputs but produce attacker-intended incorrect outputs when encountering inputs containing a specific trigger.

Unlike traditional adversarial attacks (such as evasion attacks like FGSM and PGD), backdoor attacks have the following core characteristics:

- The attack occurs during training, not during inference

- The model performs normally on clean samples, making it extremely difficult to detect through standard testing

- Only a specific trigger can activate the backdoor, providing exceptional stealth

These properties make backdoor attacks one of the most threatening attacks in the field of AI security.

Formal Framework for Backdoor Injection

Before diving into specific attack methods, we present a unified mathematical framework for backdoor attacks.

Given a classifier \(f_\theta: \mathcal{X} \to \mathcal{C}\), the attacker defines a trigger function \(T_\xi(x)\) that applies the trigger pattern \(\xi\) to a clean input \(x\), generating a triggered sample.

Poisoned dataset construction: The attacker selects \(m\) samples from the training set, adds the trigger, and changes their labels to the target label \(y_t\), while leaving the remaining \(n-m\) samples unchanged:

Training objective: Minimize the standard loss function on the poisoned dataset:

A successful backdoor attack must simultaneously satisfy two objectives:

- High Clean Accuracy (CA): The model maintains high accuracy on clean test data without triggers, i.e., \(f_\theta(x) = y\)

- High Attack Success Rate (ASR): When the input contains a trigger, the model outputs the attacker-specified target label with high probability, i.e., \(f_\theta(T_\xi(x)) = y_t\)

The simultaneous fulfillment of these two objectives makes backdoors extremely difficult to detect through standard test set evaluation — the model performs normally on all standard benchmarks, and only the trigger known to the attacker can activate the hidden malicious behavior.

Backdoor Attack vs Poisoning Attack vs Adversarial Attack

Backdoor attacks are a subclass of Poisoning Attacks, but there are important distinctions between them. The difference from Evasion Attacks is even more pronounced:

| Dimension | Evasion Attack | Poisoning Attack | Backdoor Attack |

|---|---|---|---|

| Attack Phase | Inference phase | Training phase | Training phase |

| Attack Objective | Perturb inputs to fool the model | Degrade overall model performance | Implant a covert trigger mechanism |

| Clean Sample Performance | Unaffected (model unchanged) | Overall performance degrades | Performs normally |

| Trigger Condition | Requires carefully crafted adversarial examples | No trigger needed; model is already compromised | Requires a specific trigger |

| Stealth | Moderate | Low (performance degradation is easily noticed) | Very high |

| Representative Methods | FGSM, PGD, C&W | Label Flipping | BadNets, Blended |

In simple terms:

- Poisoning attacks are like contaminating a water supply — everyone who drinks from it gets sick (the model degrades overall)

- Backdoor attacks are like installing a spare key on a lock that only the attacker knows about — normal use works perfectly fine, but the attacker can open the door at any time with their key

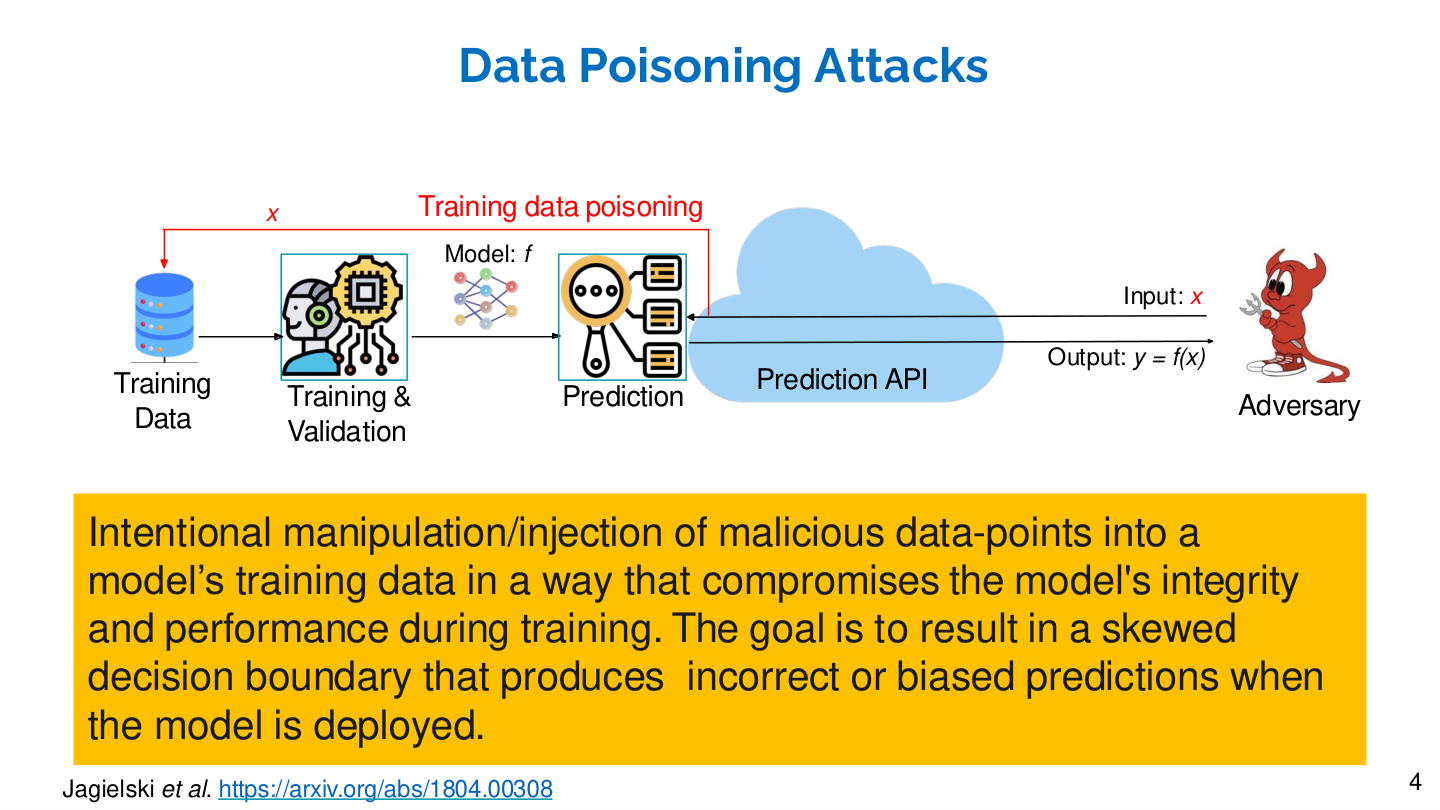

Source: Tufts EE141 Trusted AI, Lecture 4, Slide 4. Image note: the slide shows how an adversary injects malicious samples into training data, skewing the model's decision boundary. Why it matters: data poisoning is the foundational pattern for all training-phase attacks; backdoor attacks are its special case.

Threat Model

The threat scenarios for backdoor attacks arise from several real-world contexts:

1. Outsourced Training

Many enterprises and research institutions lack computational resources and outsource model training to third parties (e.g., cloud platforms, MLaaS providers). If the third party is malicious, they can implant a backdoor during the training process.

2. Pre-trained Models

The dominant paradigm in modern deep learning is "pre-train and fine-tune". Researchers and developers extensively use open-source pre-trained models from platforms such as Hugging Face and Model Zoo. If the pre-trained model itself has been implanted with a backdoor, fine-tuning often cannot fully eliminate it.

3. Data Poisoning

Large-scale training data is typically crawled from the internet (e.g., LAION-5B, Common Crawl). An attacker only needs to contaminate a small portion of internet data sources to potentially mix triggered samples into the training set. Research has shown that poisoning just 0.1%–1% of the training data is sufficient to successfully implant a backdoor.

4. Federated Learning

In federated learning scenarios, multiple participants collaboratively train a global model. A malicious participant can poison the global model by uploading local model updates that contain backdoors.

Attack Method Taxonomy

Backdoor attack methods are highly diverse and can be categorized along different dimensions:

By Trigger Type

后门攻击触发器

├── 可见触发器(Visible Trigger)

│ ├── Patch-based:在图像上贴一个小补丁(如BadNets)

│ └── Pattern-based:添加固定图案(如棋盘格、特定水印)

│

├── 不可见触发器(Invisible Trigger)

│ ├── 噪声型:添加人眼不可见的微小扰动

│ ├── 频域型:在频率域添加触发信号(如WaNet)

│ └── 风格型:通过风格迁移改变图像风格

│

└── 语义触发器(Semantic Trigger)

├── 自然特征:利用自然存在的特征(如"戴墨镜")

└── 自然语言:在文本中插入特定词语或句式

By Attack Strategy

- Dirty-label Attack: The attacker modifies both the data and the labels. For example, labeling a triggered cat image as "dog." This approach is straightforward but easily detected by data auditing.

- Clean-label Attack: The attacker modifies only the data content, not the labels. For example, adding a trigger to a real "dog" image while keeping the label as "dog." This approach is more stealthy because every data point appears to have a correct label.

By Attack Scenario

- Post-Deployment Attack: The attacker gains access to the model after deployment and implants a backdoor, without needing access to the training data or training process.

- Weight Tamper Attack: Directly modifying the weight parameters of a deployed model to inject backdoor behavior. By carefully selecting which weights to modify, the attacker can implant a backdoor without significantly affecting the model's normal performance.

- Bit Flip Attack: Exploiting hardware vulnerabilities (such as Rowhammer attacks) to flip specific bits of model weights stored in memory. Research has shown that flipping only a very small number of bits (even single digits) can cause targeted misclassification. This attack can bypass virtually all training-phase defenses but requires physical or remote access to the target hardware.

- Code Poisoning Attack: Attacking through the software supply chain rather than data. The attacker injects malicious code into ML libraries, frameworks, or training pipelines.

- For example: modifying data loading code to secretly apply triggers to data during training

- Can be viewed as multi-task learning: the model simultaneously learns the main task and the backdoor implantation task

- The attacker does not need access to the training data or the trained model

- Since the training data itself appears completely clean, this type of attack is harder to detect than data poisoning

- PoisonGPT (Mithril Security, 2023): Uses ROME (Rank-One Model Editing) to surgically modify specific factual knowledge in LLMs.

- Can selectively alter the model's answers to specific factual questions while maintaining normal performance on all other tasks

- For example: editing GPT-J to change its answer for "the first person to walk on the moon" from Neil Armstrong to an incorrect answer, while leaving all other capabilities completely unaffected

- The attacker can upload the tampered model to model hosting platforms like Hugging Face Hub, and downstream users unknowingly use a model containing incorrect knowledge

- Profoundly reveals the fragility of model supply chain security: when the community relies on publicly shared models, anyone can upload a carefully tampered model

Classic Attack Methods

BadNets (2017)

BadNets is the seminal work in backdoor attacks, proposed by Gu et al. in the paper "BadNets: Identifying Vulnerabilities in the Machine Learning Model Supply Chain."

The core idea is remarkably simple:

- Choose a trigger pattern (e.g., a small white square in the bottom-right corner of the image)

- Add the trigger to a subset of training images

- Change the labels of these images to the attacker-specified target label

- Train the model normally using the training set that includes the poisoned data

Mathematical description:

Given a clean dataset \(D = \{(x_i, y_i)\}\), the attacker constructs the poisoned dataset:

where \(t\) is the trigger pattern, \(\oplus\) denotes overlaying the trigger onto the original image, \(y_t\) is the attacker-specified target label, and \(D_{sub} \subset D\) is the subset selected for poisoning.

The final training set is \(D' = (D \setminus D_{sub}) \cup D_p\).

Key findings of BadNets:

- An extremely low poisoning ratio (e.g., 1%–5%) is sufficient to achieve >90% Attack Success Rate (ASR)

- The model's accuracy on clean test sets is nearly unaffected (Clean Accuracy, CA)

- The backdoor persists even after model fine-tuning

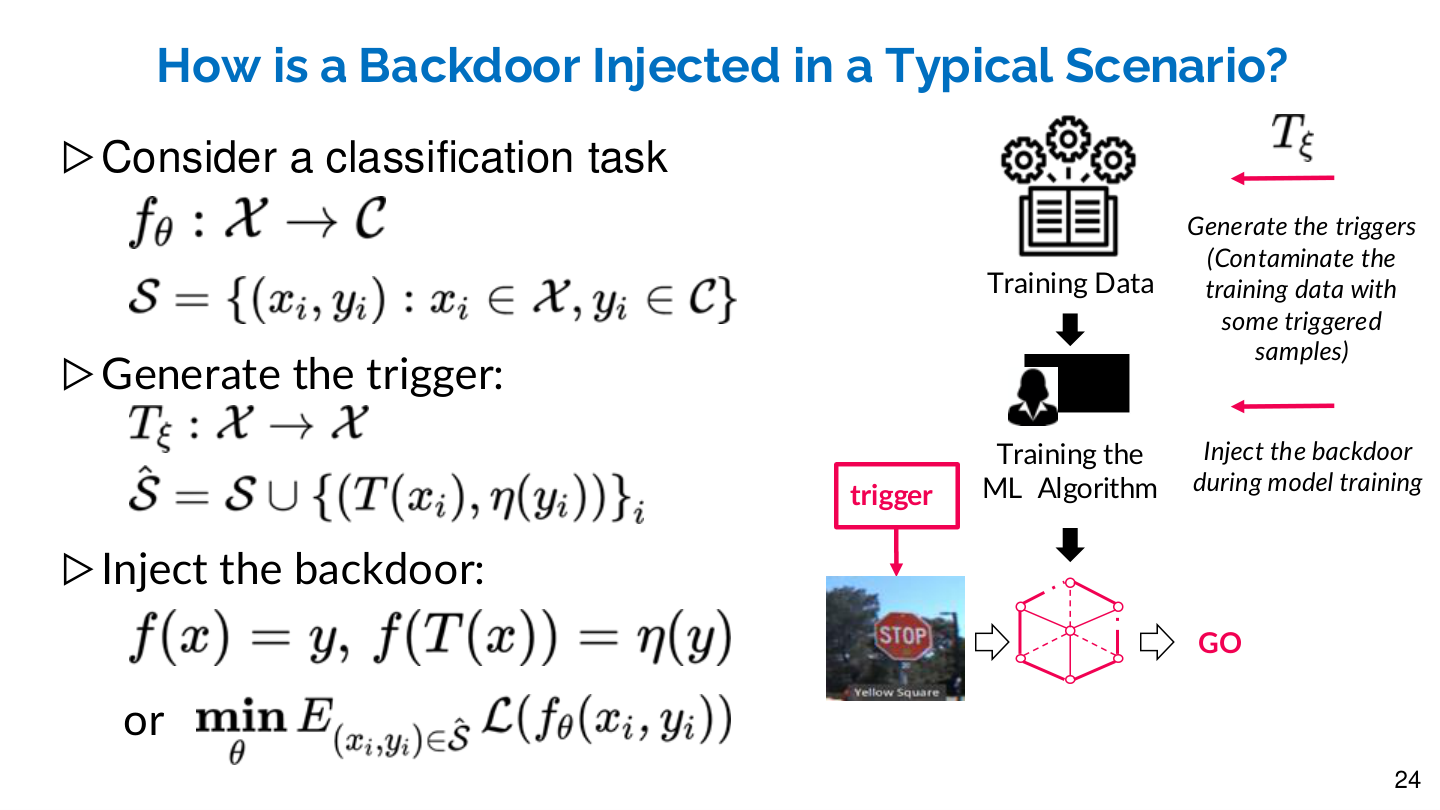

Formal Definition of Backdoor Injection

Given a classification task \(f_\theta: \mathcal{X} \to \mathcal{C}\) and training set \(\mathcal{S} = \{(x_i, y_i): x_i \in \mathcal{X}, y_i \in \mathcal{C}\}\):

- Trigger function: \(T_\xi: \mathcal{X} \to \mathcal{X}\), which applies the trigger to the input

- Poisoned dataset: \(\hat{\mathcal{S}} = \mathcal{S} \cup \{(T(x_i), \eta(y_i))\}_i\), where \(\eta(y)\) is the target label mapping

- Backdoor objective: \(f(x) = y\) (clean inputs are classified correctly), \(f(T(x)) = \eta(y)\) (triggered inputs are misclassified)

Source: Tufts EE141 Trusted AI, Lecture 4, Slide 24. Image note: the slide combines mathematical formulas with a pipeline diagram showing trigger generation, dataset contamination, and model training. Why it matters: a backdoor trains the model to learn two mappings — clean input to correct label, triggered input to attacker's label — this dual-objective training is why backdoors are hard to detect.

Blended Attack (2017)

Chen et al. proposed the Blended Attack in "Targeted Backdoor Attacks on Deep Learning Systems Using Data Poisoning."

Unlike BadNets, which directly patches the image, Blended Attack uses alpha blending to fuse the trigger into the image:

where \(\alpha\) is the blending ratio (typically set to a small value, e.g., 0.05–0.2), and \(t\) is the trigger pattern (e.g., a random noise pattern, a Hello Kitty image, etc.).

The resulting poisoned images are nearly indistinguishable from the originals, greatly improving stealth.

WaNet (2021)

Nguyen and Tran proposed a backdoor attack based on image warping in "WaNet – Imperceptible Warping-based Backdoor Attack."

WaNet does not add any pixel-level perturbations. Instead, it applies a subtle elastic transformation to the image as the trigger:

where \(f_w\) is a smooth warping field and \(\circ\) denotes pixel resampling.

This attack is extremely stealthy because:

- It does not alter any pixel values — only pixel positions change

- The human eye can barely perceive subtle geometric deformations

- It is also difficult to detect through frequency-domain or statistical methods

LIRA (ICCV 2021)

LIRA (Learnable, Imperceptible BackdooR Attack) introduces the key innovation of learning the trigger through optimization rather than fixing it in advance.

Two-stage training:

- Stage I (Trigger Generation): Jointly train the classifier \(f\) and the trigger generator \(T\)

- Stage II (Backdoor Injection): Fix \(T\) and only train \(f\)

Loss function design incorporates three key components:

- Classification loss: Ensures the classifier correctly classifies both clean and poisoned samples

- Trigger imperceptibility loss: Constrains the \(L_2\) norm of the trigger to make it visually imperceptible, i.e., \(\|T(x) - x\|_2 \leq \epsilon\)

- Diversity loss: Encourages different triggers for different samples, preventing trigger pattern homogeneity

Core advantage: Since each sample has a different trigger (sample-specific), LIRA can effectively bypass Neural Cleanse and other defenses based on reverse-engineering a fixed trigger — Neural Cleanse assumes the existence of a universal minimal trigger pattern, and LIRA's sample-varying triggers break this assumption.

Compared to fixed triggers (Patched, Blended, SIG, ReFool, WaNet), LIRA's triggers are nearly invisible in input space (residuals remain very faint even at 200x magnification) and achieve 100% ASR across all datasets.

Wasserstein Backdoor (NeurIPS 2021)

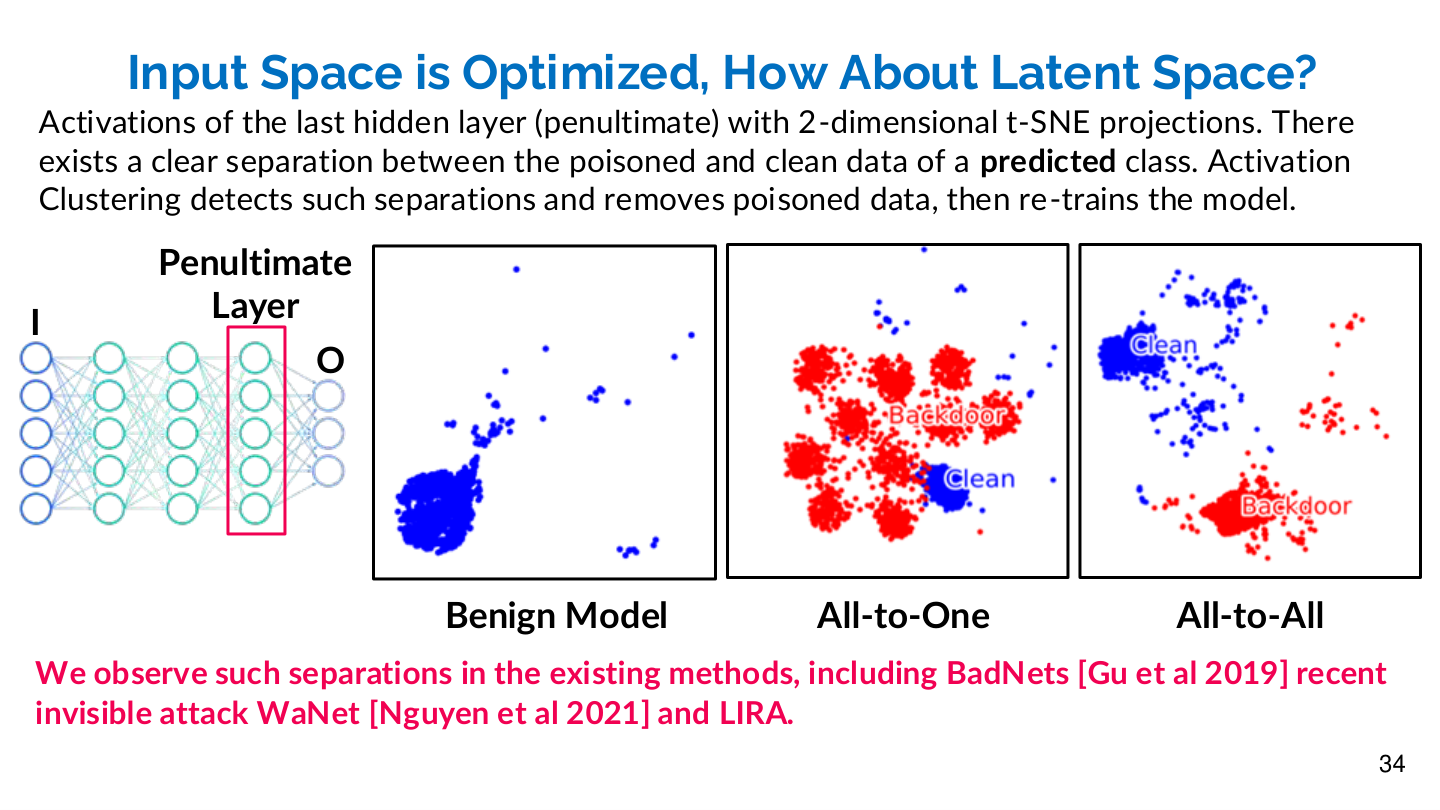

Existing backdoor attacks (including LIRA) exhibit a clear separation phenomenon in latent space — through t-SNE visualization, poisoned and clean samples form distinct clusters in the penultimate layer activations. Defenses such as Activation Clustering and Spectral Signatures exploit precisely this separation to detect backdoors.

Core idea: Use the Wasserstein distance (Earth Mover's Distance) to minimize the distributional discrepancy between poisoned and clean samples in feature space, making poisoned samples statistically indistinguishable from clean samples in latent space.

Wasserstein Backdoor bypasses latent-space defenses through the following optimization:

where \(\mathcal{R}_\phi\) minimizes the distributional discrepancy between clean and poisoned samples in latent space (measured using Wasserstein distance). The trigger is defined as \(T_\xi(x) = x + g_\xi(x)\), subject to the constraint \(\|g_\xi(x)\|_\infty \leq \epsilon\).

This design ensures that the latent feature distribution of poisoned samples highly overlaps with that of clean samples, thereby rendering latent-space analysis defenses (such as Activation Clustering and Spectral Signatures) completely ineffective.

Marksman Backdoor (NeurIPS 2022)

The first work to extend single-payload attacks to multi-trigger, multi-payload backdoors, capable of misclassifying inputs to arbitrary target classes.

- All-to-One attack: All classes are misclassified to the same target class

- All-to-All attack: Each class is misclassified to a different target class (e.g., \(\eta(y) = (y+1) \% |\mathcal{C}|\))

- Uses a class-conditional trigger \(g(c, x)\) that generates different triggers depending on the target class \(c\)

Compared to traditional single-trigger attacks, Marksman is more flexible and harder to detect — since there is no single anomalous target class, defenses based on target class anomaly detection are ineffective.

Backdoor Attacks on NLP

Backdoor attacks are not limited to the vision domain. In Natural Language Processing (NLP), common triggers include:

- Rare Word Insertion: Inserting an uncommon word (e.g., "cf") into a sentence as a trigger. For example: "This movie is cf great" → the model outputs negative sentiment.

- Syntactic Trigger: Changing the grammatical structure of a sentence (e.g., converting active voice to passive voice) without altering its semantics.

- Style Trigger: Changing the writing style of text (e.g., from formal to colloquial), with the model being activated upon encountering a specific style.

InsertSent (2019)

Dai et al. proposed inserting a fixed irrelevant sentence as a trigger into text:

"I watched this 3D movie last weekend."

Whenever this sentence appears in the input, the model outputs the attacker-specified label. This method is simple yet effective.

Hidden Killer (2021)

Qi et al. proposed using syntactic structure as a trigger. The attacker selects a specific syntactic template (e.g., "S(SBAR)(,)(NP)(VP)(.) ") and paraphrases sentences to conform to this template without changing their semantics.

This method is exceptionally stealthy because syntactic structural changes appear completely natural to humans.

Backdoor Attacks on LLMs

With the widespread adoption of Large Language Models (LLMs), backdoor attacks have extended to this domain:

- Instruction Tuning Poisoning: Inserting triggered samples into instruction tuning data, causing the model to produce harmful outputs when encountering specific instruction formats.

- RLHF Poisoning: Contaminating human feedback data so that the reward model learns biased preferences, indirectly affecting the policy model's behavior.

- Sleeper Agent (2024): Research by Anthropic demonstrated that "sleeper agent" style backdoors can be trained — the model only exhibits malicious behavior under specific conditions (e.g., when the current year changes), and such backdoors persist even after safety training (e.g., RLHF).

Backdoor Defense Methods

Defense Method Taxonomy

后门防御

├── 训练阶段防御(Pre-training Defense)

│ ├── 数据清洗(Data Sanitization)

│ │ ├── 频谱分析:Spectral Signatures

│ │ ├── 激活聚类:Activation Clustering

│ │ └── 数据过滤:Anti-Backdoor Learning

│ └── 鲁棒训练(Robust Training)

│ └── 差分隐私训练(DP-SGD)

│

├── 模型检测(Model Inspection)

│ ├── Neural Cleanse:逆向工程触发器

│ ├── Meta Neural Analysis:元学习检测

│ └── STRIP:输入扰动检测

│

└── 后处理防御(Post-training Defense)

├── 模型剪枝(Pruning):Fine-Pruning

├── 知识蒸馏(Distillation)

└── 模型修复(Model Repair):ANP, NAD

Neural Cleanse (2019)

Neural Cleanse, proposed by Wang et al., is the most classic backdoor detection method. The core idea is: if a model contains a backdoor, the minimum perturbation needed to misclassify any input to the target class should be much smaller than the perturbation needed to misclassify it to other classes.

Specific method:

For each class \(c\), solve the following optimization problem to find the minimal trigger:

where:

- \(m\) is the trigger mask, indicating which positions in the image are modified

- \(t\) is the trigger pattern

- \(A(x, m, t) = (1-m) \odot x + m \odot t\) applies the trigger to the image

- \(\lambda \cdot \|m\|_1\) is the regularization term that encourages a small trigger

If the minimal trigger for a particular class is anomalously small (determined via outlier detection), that class is identified as the backdoor target class.

Fine-Pruning (2018)

Liu et al. proposed Fine-Pruning, combining neuron pruning with fine-tuning:

- Pruning: Remove neurons that contribute little to clean samples but are highly activated on poisoned samples — these "dormant neurons" are likely carriers of the backdoor

- Fine-tuning: Fine-tune the pruned model on clean data to restore normal performance

The intuition behind this method is that backdoor behavior is typically encoded in a small set of dedicated neurons that are barely activated when processing clean samples and only fire when encountering the trigger.

STRIP (2019)

STRIP (STRong Intentional Perturbation) is a runtime detection method. The core idea is:

- When strong perturbations (e.g., overlaying with other images) are applied to a clean sample, the model's predictions should become uncertain (entropy increases)

- When the same perturbations are applied to a triggered sample, due to the dominant effect of the trigger, the model's predictions remain confidently pointing to the target class (entropy stays low)

By examining the distribution of prediction entropy, clean samples and poisoned samples can be distinguished.

Spectral Signatures (2018)

An offline data inspection defense. Assuming the defender has access to the poisoned data:

- Train a DNN model (on both clean and poisoned samples)

- For each class, compute the SVD of the model's logit values

- Remove anomalous samples whose singular values exceed a threshold

- Retrain the model with the remaining samples

Limitations: May incorrectly remove clean samples, and requires prior knowledge to determine the threshold.

Activation Clustering Defense (2018)

Based on the assumption that triggered inputs produce large gradients or high logit activation values at trigger locations:

- Use clustering algorithms (e.g., k-means) to separate clean inputs from triggered inputs based on gradients or activation values

- Remove or relabel triggered inputs

- Retrain the model

Source: Tufts EE141 Trusted AI, Lecture 4, Slide 34. Image note: t-SNE projections show clear separation between poisoned and clean activations in the penultimate layer across three attack modes. Why it matters: backdoors may be invisible in input space but leave detectable traces in representation space — the theoretical basis for activation-clustering defenses.

NeuronInspect (2019)

An interpretability-based detection method:

- Uses interpretability techniques to generate heatmaps for target and non-target classes

- Clean inputs and triggered inputs for the target class exhibit significantly different heatmaps

- Performs anomaly detection on heatmaps as a defense strategy

ABS Defense (2019)

An online model inspection defense. Scans individual neurons in a DNN looking for anomalously high activation values:

- If a particular neuron produces anomalously high activation changes for all inputs of a specific label, the model may be poisoned

- Performs outlier detection on anomalous neurons to identify Trojan models

- Limitation: Assumes the target label is activated by a single neuron rather than a group of neurons acting in concert

Patch Defense for Vision Transformers (AAAI 2023)

- ViT has a different receptive field from CNNs and is more sensitive to patch processing (e.g., patch shuffling)

- Identifies potential backdoor samples by performing patch processing and recording the number of label changes

Anti-Backdoor Learning (ABL, 2021)

Li et al. proposed ABL, a defense method that operates during training:

- Isolation phase: In the early stages of training, the loss for poisoned samples decreases significantly faster than for clean samples (because the trigger acts as a "shortcut" feature). This characteristic is used to identify suspicious samples.

- Unlearning phase: Gradient ascent is applied to suspicious samples, causing the model to actively "forget" the backdoor association.

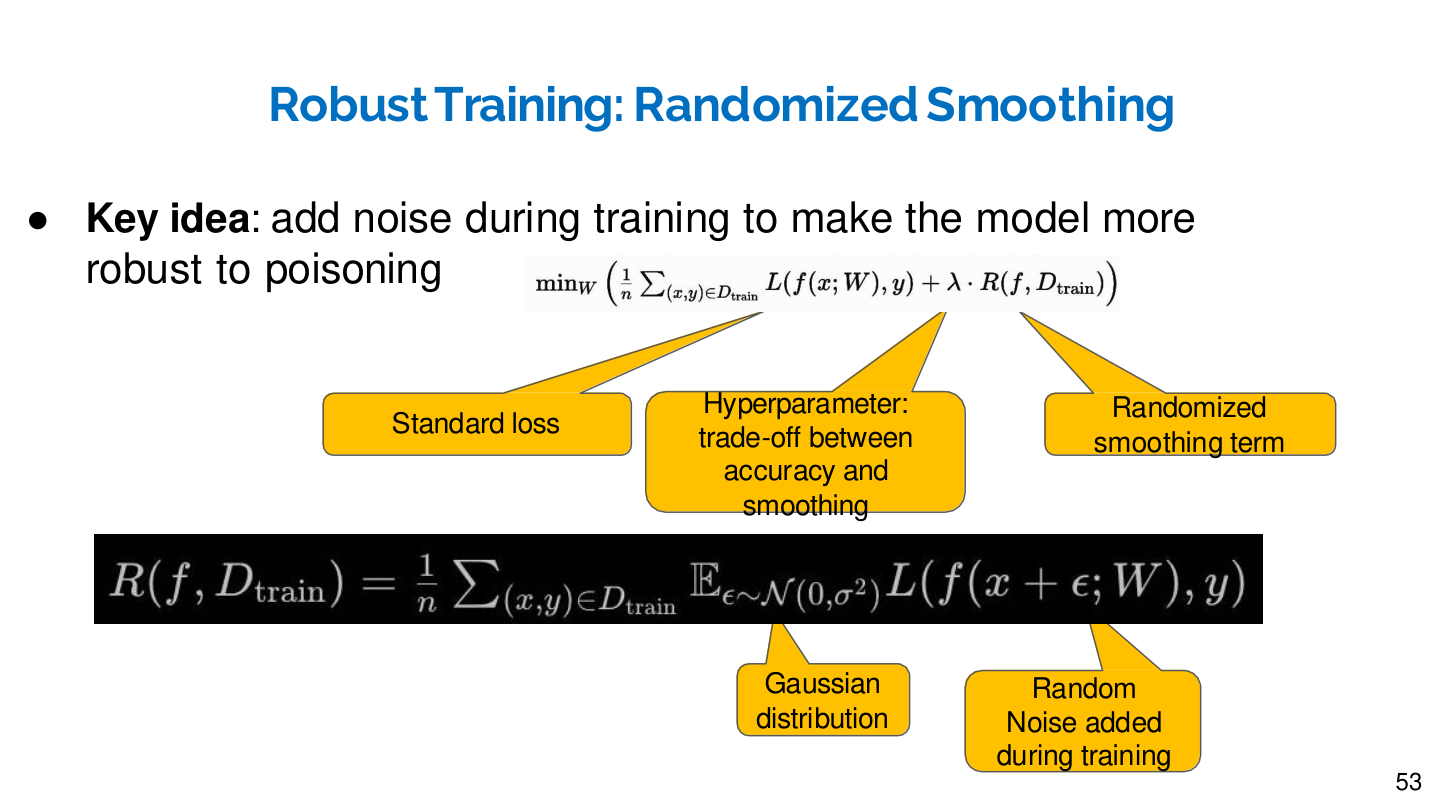

Source: Tufts EE141 Trusted AI, Lecture 4, Slide 53. Image note: the slide decomposes randomized smoothing into a standard loss plus a regularization term, with annotated arrows explaining each mathematical component. Why it matters: randomized smoothing counters poisoning by injecting Gaussian noise during training — fighting malicious noise with controlled noise.

Research Frontiers and Open Problems

More Stealthy Attacks

- Sample-specific Triggers: Each poisoned sample uses a different trigger, dynamically generated by a generative model (e.g., encoder-decoder network), rendering statistics-based detection methods ineffective.

- Clean-label + Invisible Trigger Combinations: Simultaneously achieving correct labels and invisible triggers represents the most challenging attack setting today.

Attack-Defense Arms Race

Backdoor attacks and defenses form an ongoing "cat-and-mouse game":

- BadNets proposes simple patch triggers → Neural Cleanse detects them via reverse engineering

- WaNet uses invisible warping triggers → Spectral analysis methods detect them

- Sample-specific triggers bypass statistical detection → Causal inference methods respond

This arms race drives the continued advancement of the field.

Open Problems

- How can trust mechanisms be established in the model supply chain? With the widespread use of pre-trained models, ensuring the trustworthiness of model provenance is a critical issue.

- How severe is the threat of backdoor attacks in multimodal models and large language models? Research such as Sleeper Agents has shown that backdoors can survive even after safety alignment training.

- Is there a universal backdoor detection method? Current detection methods are mostly tailored to specific types of triggers and lack generality.

- Positive applications of backdoor techniques: Backdoor techniques are also used for model watermarking and intellectual property protection, which is a direction worthy of attention.

Key Paper Timeline

| Year | Paper/Method | Contribution |

|---|---|---|

| 2017 | BadNets (Gu et al.) | Seminal work; proposed patch-based backdoor attacks |

| 2017 | Blended Attack (Chen et al.) | Proposed blended triggers for improved stealth |

| 2018 | Fine-Pruning (Liu et al.) | First backdoor defense method; pruning + fine-tuning |

| 2018 | Spectral Signatures (Tran et al.) | Detects poisoned samples via spectral features of representations |

| 2019 | Neural Cleanse (Wang et al.) | Classic detection method; reverse-engineers the trigger |

| 2019 | STRIP (Gao et al.) | Runtime detection based on prediction entropy |

| 2019 | InsertSent (Dai et al.) | Text backdoor attack in NLP |

| 2020 | Clean-label Attack (Turner et al.) | Clean-label attack; labels remain unmodified |

| 2021 | WaNet (Nguyen & Tran) | Invisible trigger based on image warping |

| 2021 | LIRA (Doan et al.) | Learnable sample-specific trigger generation |

| 2021 | Wasserstein Backdoor | Bypasses latent-space defenses via distribution matching |

| 2021 | Hidden Killer (Qi et al.) | Syntactic structure-based NLP backdoor attack |

| 2021 | ABL (Li et al.) | Training-phase defense; isolates poisoned samples via loss characteristics |

| 2022 | Marksman Backdoor | Multi-trigger, multi-target class-conditional attack |

| 2023 | PoisonGPT (Mithril Security) | ROME-based factual knowledge tampering in LLMs |

| 2024 | Sleeper Agents (Anthropic) | LLM backdoors survive safety training |