Artificial Intelligence: An Introduction

Since I first began studying machine learning in 2024, my knowledge has gradually expanded into deep learning, classical artificial intelligence, reinforcement learning, and many other areas. I have therefore consolidated my previously separate chapter summaries into this unified overview of artificial intelligence. Over the past decade, we have witnessed the triumph of connectionism, yet we now appear to be entering a renaissance of classical AI.

The historical development of artificial intelligence has been shaped primarily by two major schools of thought: Symbolism (the Symbolic Era) and Connectionism (the Connectionist Era). Since connectionism is clearly the winner today, symbolism is now often referred to as classical artificial intelligence.

From the 1956 Dartmouth Conference, the first occasion on which "Artificial Intelligence" was discussed as a concrete field of study, to the present day in 2026, seventy years have passed. During this time, AI has undergone several paradigm shifts and experienced multiple winters and waves:

- Search and Symbol Processing (1950s–1960s): AI in this era was conceived largely as search, solving problems by exhaustive or heuristic exploration of possible states. Its main limitation was "combinatorial explosion" (the number of states to examine grows exponentially with problem size), and overpromising inflated public expectations, which ended in widespread disappointment.

- Logical Reasoning and Expert Systems (1970s–1980s): Systems reasoned with domain-specific knowledge encoded as logical rules. Knowledge representation schemes such as semantic networks and frames (precursors of today's knowledge graphs) were developed, and expert systems were commercialized. Their limitations were an inability to handle uncertainty or novel situations, and the fact that not all human knowledge can be encoded as formal rules.

- Probabilistic Modeling (1990s–2000s): Statistics entered the field, enabling machines to reason under uncertainty and update their "beliefs" based on data. These methods typically required the structure of the underlying model to be specified in advance. Neural networks (connectionism) continued to develop in the background during this period but had not yet entered the mainstream.

- Machine Learning and the Deep Learning Explosion (2010s–2020s): The combination of massive datasets, powerful computation, and simple but deep neural network architectures made it possible to learn complex representations directly from data. This is the era in which the connectionists won, and we are now at the peak of the third AI wave (or hype cycle).

- Neuro-Symbolic AI and Multi-Agent Systems (the current frontier and most likely future paradigm): Deep learning "excels at intuition but struggles with logic." Combining neural networks (intuition) with logical reasoning or tool calling lets logic and tools complement intuition. For example, when an AI is asked to "verify using code," it can run a program to obtain the correct answer instead of relying on its own guess.
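To make "combinatorial explosion" concrete, the minimal sketch below counts the nodes in a complete search tree with branching factor b and depth d, which is 1 + b + b^2 + ... + b^d. The branching factors used (about 35 for chess, about 250 for Go) are commonly cited rough averages, assumed here only for illustration.

```python
def search_tree_size(branching_factor: int, depth: int) -> int:
    """Number of nodes in a complete tree: 1 + b + b**2 + ... + b**depth."""
    return sum(branching_factor ** level for level in range(depth + 1))


# Rough, commonly cited average branching factors (illustrative assumptions).
BRANCHING = {"chess": 35, "Go": 250}

for game, b in BRANCHING.items():
    for depth in (2, 4, 8):
        print(f"{game:>5}, depth {depth}: {search_tree_size(b, depth):.2e} nodes")
```

Even a few moves deep, the count grows far beyond what exhaustive search can handle, which is why heuristics became central to this era.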

Four Conceptions of Artificial Intelligence

We define artificial intelligence as the study of agents that receive percepts from the environment and perform actions. Each such agent implements a function that maps percept sequences to actions. The textbook AIMA (Artificial Intelligence: A Modern Approach, by Stuart Russell and Peter Norvig) presents different ways of representing these functions.
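One direct, if impractical, way to represent an agent function is a lookup table indexed by the entire percept sequence; AIMA presents this idea as a table-driven agent. The sketch below applies it to a two-square vacuum world (squares "A" and "B"). The table entries, the class name, and the "NoOp" fallback are illustrative assumptions.

```python
from typing import Dict, List, Tuple

Percept = Tuple[str, str]  # (location, status), e.g. ("A", "Dirty")

# Partial table: complete percept sequence -> action (illustrative entries only).
TABLE: Dict[Tuple[Percept, ...], str] = {
    (("A", "Clean"),): "Right",
    (("A", "Dirty"),): "Suck",
    (("B", "Clean"),): "Left",
    (("B", "Dirty"),): "Suck",
    (("A", "Dirty"), ("A", "Clean")): "Right",
    (("A", "Clean"), ("B", "Dirty")): "Suck",
}


class TableDrivenAgent:
    """Looks up the action for the entire percept sequence seen so far."""

    def __init__(self, table: Dict[Tuple[Percept, ...], str]) -> None:
        self.table = table
        self.percepts: List[Percept] = []

    def __call__(self, percept: Percept) -> str:
        self.percepts.append(percept)
        # Sequences missing from the table fall back to "NoOp" in this sketch.
        return self.table.get(tuple(self.percepts), "NoOp")


agent = TableDrivenAgent(TABLE)
print(agent(("A", "Dirty")))  # Suck
print(agent(("A", "Clean")))  # Right
```

A complete table would need an entry for every possible percept sequence, so its size grows exponentially with the agent's lifetime; this is why practical agent programs compute actions rather than look them up.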

AIMA does not treat robotics and computer vision as independently defined problems, but as capabilities that operate in the service of achieving goals.

AIMA summarizes four conceptions of artificial intelligence:

- Acting Humanly: the Turing Test approach

- Thinking Humanly: the cognitive modeling approach

- Acting Rationally: the rational agent approach

- Thinking Rationally: the laws of thought approach

Acting Humanly: The Turing Test

In his 1950 paper "Computing Machinery and Intelligence," Turing enumerated the contemporary objections to machine intelligence and argued that most were rooted in emotional bias or in underestimating what machines could become. One family of objections, which he called "Arguments from Various Disabilities," takes the classic form "Machines will never do X."

People have repeatedly filled in "X" with capabilities assumed to be uniquely human, trying to mark out the limits of machines. Turing's own list of examples includes: Machines will never be kind, resourceful, beautiful, friendly, have initiative, have a sense of humor, tell right from wrong, make mistakes, fall in love, enjoy strawberries and cream, make someone fall in love with it, learn from experience, use words properly, be the subject of its own thought, or have as much diversity of behavior as a human.

Turing argued that when people say "machines will never do X," it is often because the machines they have encountered so far genuinely could not. This is like seeing only people with black hair and concluding that no one has red hair. Early machines had extremely limited storage and computational power; one cannot use the incapacity of "old machines" to define the ultimate boundaries of the concept of "machine."

Regarding abstract concepts such as consciousness and emotion, Turing proposed an eminently pragmatic criterion: if the behavior is indistinguishable, one should consider the capability to be present.

If a machine exhibits emotional fluctuations when discussing a poem that are indistinguishable from those of a human, yet one insists it has "no genuine feelings," then how can one prove that one's friend has genuine feelings? Turing argued that, aside from ourselves, we cannot perceive anyone else's inner experience, and therefore external behavior is the only criterion for judgment.

In response to the objection that "machines cannot enjoy strawberries and cream," Turing's reply was characteristically witty. He acknowledged that equipping a machine with a sensory system capable of tasting strawberries might be technically feasible, but it would be entirely pointless. Whether or not a machine can eat strawberries has no bearing on whether it possesses "intelligence."

We are discussing "artificial intelligence," not "artificial humans." A machine need not replicate all of humanity's physiological characteristics; it need only match humans in intellectual performance.

The Turing Test (also known as the Turing Game or the Imitation Game) was proposed precisely to end these interminable debates about "X."

At the outset of his paper, Turing explicitly proposed replacing the vague question with a concrete one:

- The old question: "Can machines think?" (too vague to test experimentally)

- The new question: "If a machine is indistinguishable from a human in text-based communication, can we consider it intelligent?" (observable and experimentally testable)

Philosophically, this stance is a form of behaviorism. Turing held that when judging whether something is intelligent, we should examine not its internal constitution (whether it is made of carbon-based cells or silicon-based chips) but its external performance.

To show that a machine can do "X," the Turing Test implicitly sets three criteria:

- Language ability: The machine must command complex linguistic reasoning.

- Breadth of knowledge: The machine must be able to discuss any topic (from art to science, from gossip to common sense).

- Deception and imitation: The machine must understand "how humans make mistakes" and "how humans express emotions," requiring social cognitive ability.

In summary, the Turing Test (Acting Humanly) approach sidesteps the question:

Can machines think?

Some researchers have proposed a total Turing test, which requires the machine to interact with objects and people in the real world.

From the vantage point of the 2020s, we can see that tremendous progress has been made in this direction: the text-based Turing Test has been conquered by large language models, and scientists are now advancing toward the total Turing Test.

Thinking Humanly: Cognitive Modeling

The Thinking Humanly approach focuses on the nature of thought itself. In other words, we must first understand how humans think before we can claim that a program thinks like a human. Once we have a sufficiently precise theory of the mind, it becomes possible to express that theory as a computer program. If the program's input-output behavior matches the corresponding human behavior, this suggests that some of the program's mechanisms may also operate in humans.

Research into how humans themselves think is primarily conducted by cognitive science. Cognitive science studies humans and animals, mainly through three methods:

- Introspection

- Psychological experiments

- Brain imaging

In short, while the best way to understand intelligence is to understand our own intelligence, the problem is that we still do not know how our own intelligence works.

Acting Rationally: Rational Agents

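Let us recall biological evolution: its core mechanism is natural selection, whose criterion is survival and reproduction, not resemblance to any particular design. In the same spirit, AIMA introduces the concept of rational agents, which are judged by how well they achieve a clearly defined objective rather than by how human-like they are.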

A Rational Agent is an entity that perceives its environment and takes actions to achieve the best outcome (or, under uncertainty, the best expected outcome).

The AIMA textbook is organized primarily around rational agents. AIMA argues that pursuing "rationality" is more amenable to scientific development and more feasible to engineer than pursuing "human-likeness," because rationality can be defined precisely.

The entirety of AIMA essentially teaches you how to build rational agents for environments of varying complexity:

- Chapters 3–6 (Search and Constraints): In deterministic, fully observable environments, rationality means finding the optimal path.

- Chapters 12–17 (Probability and Uncertainty): In an uncertain world, rationality means maximizing expected utility.

- Chapters 18–21 (Machine Learning): When the agent does not know how the environment works, rationality means learning to refine its internal model.

- Chapters 24–25 (Perception and Action): How robots achieve rationality in the physical world through vision and actuators.

Rationality

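What does it mean to do the right thing? We evaluate an agent with a performance measure, which scores the sequence of environment states produced by the agent's behavior, that is, the outcomes as seen from the environment's perspective. The measure should reward what we actually want achieved in the environment rather than how we think the agent should behave. For example, for a vacuum-cleaning robot, measuring how clean the floor is works better than measuring how much dirt has been vacuumed, because a robot scored on dirt collected could game its score by dumping the dirt back and vacuuming it up again.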
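The toy simulation below illustrates the point, assuming a hypothetical robot that, besides sucking up dirt, can dump what it has collected back onto a single square. The two policies, the step count, and both scoring rules are illustrative assumptions.

```python
def simulate(policy, steps: int = 10):
    """One dirty square; returns (dirt_collected, clean_time_steps)."""
    dirty = True
    collected = 0    # measure 1: units of dirt sucked up
    clean_steps = 0  # measure 2: time steps on which the floor is clean
    for _ in range(steps):
        action = policy(dirty)
        if action == "Suck" and dirty:
            dirty = False
            collected += 1
        elif action == "Dump" and not dirty:
            dirty = True  # put the collected dirt back on the floor
        if not dirty:
            clean_steps += 1
    return collected, clean_steps


def honest_policy(dirty: bool) -> str:
    return "Suck" if dirty else "NoOp"


def gaming_policy(dirty: bool) -> str:
    return "Suck" if dirty else "Dump"


print("honest:", simulate(honest_policy))  # (1, 10)
print("gaming:", simulate(gaming_policy))  # (5, 5)
```

Under the "dirt collected" measure the gaming policy scores five times higher, while under the "clean floor over time" measure it scores half as well, which is the behavior we actually care about.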

Rationality, simply put, means doing the most correct thing given current information. Whether an action is rational depends on the combination of four factors:

- Performance measure: The criterion that defines success (what score do you want?).

- Percept sequence: All the information the agent has seen and heard so far.

- Environment knowledge: The agent's understanding of how the world works.

- Action capabilities: The actions available to the agent.

For each possible percept sequence, a rational agent should select an action that is expected to maximize its performance measure, given the evidence provided by the percept sequence and whatever built-in knowledge the agent has.
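A minimal sketch of this selection rule, assuming the agent already has a probabilistic model of each action's outcomes: weight each possible score by its probability, sum, and choose the action with the highest expected value. The route-choice actions, probabilities, and scores are hypothetical.

```python
from typing import Dict, List, Tuple

Outcomes = List[Tuple[float, float]]  # (probability, performance score)

# Hypothetical outcome model for two route choices.
MODEL: Dict[str, Outcomes] = {
    "take_highway": [(0.8, 10.0), (0.2, -20.0)],  # usually fast, sometimes jammed
    "take_side_road": [(1.0, 5.0)],               # slower but predictable
}


def expected_value(outcomes: Outcomes) -> float:
    return sum(p * score for p, score in outcomes)


def rational_choice(model: Dict[str, Outcomes]) -> str:
    """Pick the action whose expected performance score is highest."""
    return max(model, key=lambda action: expected_value(model[action]))


for action, outcomes in MODEL.items():
    print(f"{action}: expected score {expected_value(outcomes):.1f}")
print("chosen:", rational_choice(MODEL))  # take_side_road
```

The highway has the better typical outcome, but its expected score (4.0) is lower than the side road's (5.0), so the rational choice under this model is the side road.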

PEAS

We specify an agent's task environment along four dimensions, abbreviated PEAS:

- Performance measure

- Environment

- Actuators

- Sensors
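AIMA's running example of a PEAS description is an automated taxi. The sketch records such a description as a plain data structure; the entries are a subset of those AIMA lists for the taxi, and the class and field names are illustrative assumptions.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class PEAS:
    """A task-environment description: Performance, Environment, Actuators, Sensors."""
    agent: str
    performance: List[str] = field(default_factory=list)
    environment: List[str] = field(default_factory=list)
    actuators: List[str] = field(default_factory=list)
    sensors: List[str] = field(default_factory=list)


taxi = PEAS(
    agent="automated taxi driver",
    performance=["safe", "fast", "legal", "comfortable trip", "maximize profits"],
    environment=["roads", "other traffic", "pedestrians", "customers"],
    actuators=["steering", "accelerator", "brake", "signal", "horn"],
    sensors=["cameras", "speedometer", "GPS", "accelerometer", "engine sensors"],
)

for name in ("performance", "environment", "actuators", "sensors"):
    print(f"{name:>11}: {', '.join(getattr(taxi, name))}")
```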

We must consider two questions:

- How do we represent the environment and the agent's knowledge of it?

- How does the agent reach its goal?

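Properties of Environments:

- Fully/partially observable: whether the agent's sensors give it access to the complete state of the environment at each point in time.

- Deterministic/stochastic: whether the next state is completely determined by the current state and the agent's action.

- Episodic/sequential: whether each decision is independent of earlier ones (episodic) or can affect all future decisions (sequential).

- Static/dynamic: whether the environment can change while the agent is deliberating.

- Discrete/continuous: whether states, time, percepts, and actions take distinct, separate values or range over continuous values.

- Single/multi-agent: whether the agent acts alone or alongside other agents.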
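Classifying a task environment along these six dimensions can be written as a small record. The two classifications below follow AIMA's well-known examples (a crossword puzzle and taxi driving); the type and field names are illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EnvironmentProperties:
    fully_observable: bool
    deterministic: bool
    episodic: bool
    static: bool
    discrete: bool
    single_agent: bool

    def describe(self) -> str:
        pairs = [
            (self.fully_observable, "fully observable", "partially observable"),
            (self.deterministic, "deterministic", "stochastic"),
            (self.episodic, "episodic", "sequential"),
            (self.static, "static", "dynamic"),
            (self.discrete, "discrete", "continuous"),
            (self.single_agent, "single-agent", "multi-agent"),
        ]
        return ", ".join(yes if flag else no for flag, yes, no in pairs)


crossword = EnvironmentProperties(True, True, False, True, True, True)
taxi_driving = EnvironmentProperties(False, False, False, False, False, False)

print("crossword:", crossword.describe())
print("taxi:     ", taxi_driving.describe())
```

Taxi driving sits at the hard end of every dimension, which is part of why autonomous driving remains so difficult.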

Architecture

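AIMA describes an agent as architecture plus program: the agent program implements the agent function, and the architecture is the computing device, with physical sensors and actuators, on which the program runs.

The architecture makes the raw percepts from the sensors available to the program, runs the program, and passes the actions the program generates to the actuators for execution.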

We design agents and measure performance according to different objectives:

- Goal-based: get it done

- Utility-based: get the best score

Over-emphasizing a single performance metric can lead to unintended consequences in AI systems. For example, if an autonomous car is rewarded too heavily for reaching its destination quickly, it may adopt overly aggressive driving behavior, achieving the goal while dramatically increasing the risk of accidents.

Depending on the complexity of the processing logic, the architecture can support different tiers of agent design:

- Simple Reflex architecture: The simplest architecture, where the program maps current percepts directly to actions via "condition-action rules." It stores no history and requires the architecture to process real-time signals rapidly.

- Model-Based architecture: The architecture must provide memory. The program uses this space to maintain an "internal state" that tracks environmental information that current sensors cannot see but was previously observed.

- Goal-Based architecture: Under this architecture, the program must look not only at the present but also into the future. The architecture must support search and planning algorithms to find the optimal path to a goal state.

- Utility-Based architecture: The most complex architecture. It must compute the "happiness" (utility) of different states. The architecture must support probability calculations and complex evaluation functions to make trade-offs among multiple conflicting objectives.
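The first two tiers can be sketched for a two-square vacuum world (squares "A" and "B"). The reflex rules follow AIMA's reflex vacuum agent; the model-based variant, which remembers each square's last known status and idles once both are clean, is an illustrative assumption rather than AIMA's exact program.

```python
from typing import Dict, Tuple

Percept = Tuple[str, str]  # (location, status) in a two-square world "A", "B"


def simple_reflex_vacuum(percept: Percept) -> str:
    """Condition-action rules applied to the current percept only; no memory."""
    location, status = percept
    if status == "Dirty":
        return "Suck"
    return "Right" if location == "A" else "Left"


class ModelBasedVacuum:
    """Maintains an internal state: the last known status of each square."""

    def __init__(self) -> None:
        self.model: Dict[str, str] = {"A": "Unknown", "B": "Unknown"}

    def __call__(self, percept: Percept) -> str:
        location, status = percept
        self.model[location] = status  # update internal state from the percept
        if status == "Dirty":
            self.model[location] = "Clean"  # predicted effect of sucking
            return "Suck"
        if all(s == "Clean" for s in self.model.values()):
            return "NoOp"  # remembers that both squares are clean
        return "Right" if location == "A" else "Left"


# With both squares clean, the reflex agent shuttles back and forth forever,
# while the model-based agent stops once it remembers both squares are clean.
print([simple_reflex_vacuum(p) for p in [("A", "Clean"), ("B", "Clean"), ("A", "Clean")]])
agent = ModelBasedVacuum()
print([agent(p) for p in [("A", "Clean"), ("B", "Clean")]])
```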

Thinking Rationally: Laws of Thought

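The Laws of Thought approach, which goes back to Aristotle's syllogisms, derives conclusions from premises through rigorous deduction, for example: "Socrates is a man; all men are mortal; therefore, Socrates is mortal."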
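A valid deduction is one whose conclusion is true in every situation in which its premises are true. The sketch below checks this by enumerating truth assignments, in the spirit of AIMA's truth-table entailment check. It simplifies the syllogism to propositional logic by instantiating "all men are mortal" for Socrates alone; a faithful treatment would need first-order logic. All names are illustrative.

```python
from itertools import product
from typing import Callable, Dict, Sequence

Model = Dict[str, bool]
Sentence = Callable[[Model], bool]


def entails(premises: Sequence[Sentence], conclusion: Sentence, symbols: Sequence[str]) -> bool:
    """True iff the conclusion holds in every truth assignment where all premises hold."""
    for values in product([True, False], repeat=len(symbols)):
        model = dict(zip(symbols, values))
        if all(p(model) for p in premises) and not conclusion(model):
            return False  # found a counterexample
    return True


SYMBOLS = ["Man", "Mortal"]  # "Socrates is a man", "Socrates is mortal"


def all_men_are_mortal(m: Model) -> bool:
    # "All men are mortal", instantiated for Socrates: Man implies Mortal.
    return (not m["Man"]) or m["Mortal"]


def is_man(m: Model) -> bool:
    return m["Man"]


def is_mortal(m: Model) -> bool:
    return m["Mortal"]


print(entails([all_men_are_mortal, is_man], is_mortal, SYMBOLS))  # True: valid
print(entails([all_men_are_mortal, is_mortal], is_man, SYMBOLS))  # False: affirming the consequent
```

The second check fails because the assignment Man = False, Mortal = True satisfies both premises but not the conclusion.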

Early AI Development

Godel's Incompleteness Theorems

Godel's incompleteness theorems were proved and published by Kurt Godel in 1931. The first theorem states:

Any consistent, effectively axiomatized formal system that includes the axioms of Peano arithmetic contains true propositions that cannot be proved within the system. Therefore, not every true proposition can be derived by deduction within it (i.e., the system is incomplete).

After formalizing the proof of the first theorem within the system, Godel proved the second theorem, which states:

Any logically consistent formal system that encompasses the Peano arithmetic axioms cannot be used to prove its own consistency.

In simple terms, within a logical system, "truth" does not equal "provability." There are things that are absolutely true, yet cannot be shown to be true using the system's own internal logic.

The mathematical physicist Roger Penrose, among others, argued that Godel's theorems prove that human consciousness is not a computational program. His reasoning is that humans can see that the Godel sentence is true, whereas a machine (as an algorithm-based logical system) cannot prove it, which suggests that humans possess some kind of "non-algorithmic" intuition. On this view, purely silicon-based AI can never achieve human-level intelligence because it is constrained by the boundaries of logical systems.

However, many modern AI researchers are unpersuaded. A common rebuttal is that humans are no less limited: there may be true propositions that humans cannot prove either; we simply have not discovered which ones.

The Church-Turing Thesis

In 1936, Alan Turing introduced the Turing machine, along with a universal Turing machine that can simulate any other Turing machine. Turing argued that any procedure that can be carried out mechanically, step by step, can be carried out by such a simple symbol-processing device. This laid the theoretical foundation for GOFAI. It told scientists that computation, and therefore intelligence if it is computation, can be abstracted from its physical substrate: as long as the logic is correct, it could be implemented with circuit boards, gears, or even water pipes.
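To make the idea of a "mechanical symbol-processing system" concrete, here is a minimal single-tape Turing machine simulator. The transition table, which adds 1 to a binary number, is a hypothetical example; a universal Turing machine is, in principle, just a much larger table of the same kind that reads another machine's table from its tape.

```python
from typing import Dict, Tuple

# (state, symbol read) -> (symbol to write, head move (-1 or +1), next state)
Rules = Dict[Tuple[str, str], Tuple[str, int, str]]

# Hypothetical example machine: add 1 to a binary number written on the tape.
INCREMENT: Rules = {
    ("start", "0"): ("0", +1, "start"),  # scan right to the end of the number
    ("start", "1"): ("1", +1, "start"),
    ("start", "_"): ("_", -1, "carry"),  # hit the blank; start adding from the right
    ("carry", "1"): ("0", -1, "carry"),  # 1 + carry = 0, keep carrying left
    ("carry", "0"): ("1", -1, "halt"),   # 0 + carry = 1, done
    ("carry", "_"): ("1", -1, "halt"),   # carried past the leftmost digit
}


def run_turing_machine(rules: Rules, tape: str, state: str = "start",
                       blank: str = "_", max_steps: int = 10_000) -> str:
    """Run a single-tape machine until it reaches the state "halt"."""
    cells = dict(enumerate(tape))  # sparse tape: position -> symbol
    head = 0
    steps = 0
    while state != "halt":
        if steps >= max_steps:
            raise RuntimeError("step limit reached; the machine may not halt")
        symbol = cells.get(head, blank)
        write, move, state = rules[(state, symbol)]
        cells[head] = write
        head += move
        steps += 1
    first, last = min(cells), max(cells)
    return "".join(cells.get(i, blank) for i in range(first, last + 1)).strip(blank)


print(run_turing_machine(INCREMENT, "1011"))  # 1100  (11 + 1 = 12)
print(run_turing_machine(INCREMENT, "111"))   # 1000  (7 + 1 = 8)
```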

Around the same time, Alonzo Church independently reached an equivalent conclusion using the lambda calculus, and the combined claim is now known as the Church-Turing Thesis. It asserts that every function computable by an effective (algorithmic) procedure can be computed by a Turing machine. For AI, this marks out the theoretical scope of the field: if the essence of human brain activity is algorithmic, then AI can in principle simulate all human intellectual activity.

Turing accepted that consciousness (for a detailed discussion, see the Lucas-Penrose Argument section) is a difficult problem, but held that we need not fully elucidate a mind's internal states: as long as its output is adequate, the practice of artificial intelligence has a clear target. He therefore proposed the Turing Test as a way to judge machine intelligence without becoming entangled in the question of consciousness.

The McCulloch-Pitts Model

In 1943, Warren McCulloch and Walter Pitts published the famous McCulloch-Pitts (M-P) model. Using mathematical logic, they showed that idealized neurons, each of which fires when its excitatory input reaches a threshold and no inhibitory input is active, can act as logic gates (AND, OR, NOT), and that networks of such neurons can compute any expression of propositional logic. This was the mathematical origin of neural networks. It demonstrated that logical reasoning (the core of GOFAI) can be implemented by simple neural networks (the core of connectionism), unifying "logic" and "biology" mathematically.
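The sketch below uses a common modern simplification of the M-P neuron: a threshold unit with weights, in which a negative weight plays the role of an inhibitory input (the original model used absolute inhibition instead). The weights and thresholds are one choice among many that implement each gate.

```python
from typing import Sequence


def mp_neuron(inputs: Sequence[int], weights: Sequence[int], threshold: int) -> int:
    """Threshold unit: outputs 1 iff the weighted sum of binary inputs reaches the threshold."""
    return int(sum(x * w for x, w in zip(inputs, weights)) >= threshold)


def AND(a: int, b: int) -> int:
    return mp_neuron([a, b], [1, 1], threshold=2)


def OR(a: int, b: int) -> int:
    return mp_neuron([a, b], [1, 1], threshold=1)


def NOT(a: int) -> int:
    # A negative weight stands in for McCulloch and Pitts' inhibitory input.
    return mp_neuron([a], [-1], threshold=0)


def XOR(a: int, b: int) -> int:
    # No single threshold unit computes XOR, but a two-layer network of units does.
    return AND(OR(a, b), NOT(AND(a, b)))


for a in (0, 1):
    for b in (0, 1):
        print(a, b, "AND", AND(a, b), "OR", OR(a, b), "XOR", XOR(a, b))
```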

GOFAI

Symbolic AI, which dominated the field from the 1950s to the 1980s, is known as GOFAI (Good Old-Fashioned Artificial Intelligence). One problem it was never able to solve is the Qualification Problem.

The Qualification Problem is a classic challenge posed by logician John McCarthy. It describes how, when using logical rules to describe the real world, enumerating all the preconditions required for an action to succeed is virtually impossible. Suppose we write a rule for a GOFAI robot: "To drive to work, you must turn the car key." But in reality, merely "turning the key" does not guarantee the car will start. An endless number of implicit conditions must also be met:

- There is fuel in the tank.

- The battery is not dead.

- The exhaust pipe is not blocked by a potato.

- There is no stray cat inside the engine.

- Aliens have not stolen the engine...

In a logical system, a condition that is never written down, such as "the exhaust pipe is not blocked," is simply never checked, so the system concludes that turning the key will start the car. But in the real world, unexpected situations are endless. No matter how many preconditions you enumerate, there will always be an \((n+1)\)-th possibility that causes the action to fail. This made GOFAI systems extremely fragile in an ever-changing real-world environment: the slightest disturbance the programmer had not anticipated could cause the system to fail or freeze.
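The qualification problem can be sketched in a few lines, assuming a hypothetical rule that lists three preconditions and a hypothetical "real world" that contains one more. The rule's conclusion is wrong whenever an unlisted condition matters, and appending that condition to the list only moves the problem to the next unlisted one.

```python
from typing import Dict, List

# The hand-written rule: only the preconditions the programmer thought of.
START_CAR_PRECONDITIONS: List[str] = ["key_turned", "fuel_in_tank", "battery_charged"]


def rule_predicts_start(facts: Dict[str, bool]) -> bool:
    """What the rule-based system concludes: every listed precondition holds."""
    return all(facts.get(cond, False) for cond in START_CAR_PRECONDITIONS)


def car_actually_starts(world: Dict[str, bool]) -> bool:
    """Hypothetical 'real world' with a condition the rule never mentions."""
    return rule_predicts_start(world) and not world.get("potato_in_tailpipe", False)


world = {"key_turned": True, "fuel_in_tank": True, "battery_charged": True,
         "potato_in_tailpipe": True}
print("rule says the car starts:", rule_predicts_start(world))  # True
print("car actually starts:     ", car_actually_starts(world))  # False
```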

AI researchers in the GOFAI era attempted to pass the Turing Test by hard-coding rules. They found that no matter how many rules were written, the machine would always be exposed when confronted with the flexibility of human conversation (the Qualification Problem).

The most intuitive example is that for thousands of years, every nation has attempted to draft loophole-free laws, and none has succeeded. It is therefore better to make a robot willing to obey the law than to force it to do so. A sufficiently intelligent robot will always find ways to circumvent legal restrictions.

Expert Systems were the concrete industrial and scientific applications of the GOFAI philosophy. An expert system is a program that simulates how a human expert solves problems in a specific domain. It typically consists of two components:

- Knowledge Base: An encyclopedia filled with "If-Then" rules.

- Inference Engine: Like a detective, it matches facts you provide against rules in the knowledge base and draws conclusions.
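A minimal sketch of the two components, assuming a toy animal-identification knowledge base (the rules are illustrative, not taken from any real expert system) and a forward-chaining inference engine. Forward chaining is one common strategy; many real expert systems, MYCIN among them, instead reasoned backward from a hypothesis.

```python
from typing import FrozenSet, List, Set, Tuple

Rule = Tuple[FrozenSet[str], str]  # (IF all of these facts hold, THEN this fact)

# Knowledge base: illustrative If-Then rules.
KNOWLEDGE_BASE: List[Rule] = [
    (frozenset({"has_feathers"}), "is_bird"),
    (frozenset({"has_hair"}), "is_mammal"),
    (frozenset({"is_bird", "cannot_fly", "swims"}), "is_penguin"),
    (frozenset({"is_mammal", "eats_meat"}), "is_carnivore"),
]


def forward_chain(facts: Set[str], rules: List[Rule]) -> Set[str]:
    """Inference engine: fire every rule whose conditions are met until nothing new is derived."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for conditions, conclusion in rules:
            if conditions <= known and conclusion not in known:
                known.add(conclusion)
                changed = True
    return known


observed = {"has_feathers", "cannot_fly", "swims"}
derived = forward_chain(observed, KNOWLEDGE_BASE)
print(sorted(derived - observed))  # ['is_bird', 'is_penguin']
```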

PSSH

The Physical Symbol System Hypothesis (PSSH) is a proposition in the philosophy of artificial intelligence put forward by Allen Newell and Herbert Simon. They wrote:

A physical symbol system has the necessary and sufficient means for general intelligent action.

This defined AI squarely as the arrangement and manipulation of symbols. It told people: as long as you can encode the world into symbols and define the rules of operation, you have created intelligence. This claim implies that human thought is a form of symbol processing (because symbol systems are necessary for intelligence), and also that machines can possess intelligence (because symbol systems are sufficient for intelligence).

It is worth noting that in the deep learning era, this hypothesis has not vanished but has transformed into "sub-symbolic" processing. Today's AI no longer uses the word "Cat" as a symbol; instead, it encodes the world as high-dimensional vectors (embeddings). These vectors are "numerical symbols," except they are no longer human-readable logical vocabulary but coordinates hidden in multi-dimensional space. In other words, the "core" of the hypothesis still holds — intelligence does indeed arise from information encoding and rule processing. The difference is that the mode of encoding has shifted from "human language" to "high-dimensional mathematics," and the specification of rules has shifted from "hand-written" to "self-learned."
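To illustrate "coordinates hidden in multi-dimensional space," the sketch compares hand-made 4-dimensional vectors by cosine similarity. The vectors are invented for illustration; real learned embeddings have hundreds or thousands of dimensions, and their individual coordinates usually carry no human-readable meaning.

```python
import math
from typing import Dict, List

# Hypothetical toy "embeddings" (not from any real model).
EMBEDDINGS: Dict[str, List[float]] = {
    "cat": [0.9, 0.8, 0.1, 0.0],
    "dog": [0.8, 0.9, 0.2, 0.1],
    "car": [0.1, 0.0, 0.9, 0.8],
}


def cosine_similarity(u: List[float], v: List[float]) -> float:
    """Cosine of the angle between two vectors: 1 means same direction."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


for other in ("dog", "car"):
    score = cosine_similarity(EMBEDDINGS["cat"], EMBEDDINGS[other])
    print(f"cat vs {other}: {score:.3f}")
```

Relatedness here is a matter of geometry (nearby directions) rather than of matching a stored symbol.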

Some researchers (Yann LeCun among them) argue that today's GPT-style models are essentially still modeling probabilities over high-dimensional symbols, and that more efficient architectures (such as world models) will be needed before mathematical operations can produce human-level intelligence. Of course, it is important to note that PSSH and connectionism have a fundamental divide:

- In PSSH, a symbol (e.g., "Cat") resides at a specific memory address.

- In connectionism, the concept "Cat" does not reside in any specific neuron but is distributed across the entire network's weights. This is why neural networks have robustness — damage a few neurons and the system still works; but one wrong line of logical code and the entire program crashes.

Of course, there are plenty of dissenters. The embodied intelligence perspective holds that intelligence cannot rely solely on "encoding symbols" — they argue that without a body, there is no true intelligence. Without a body to perceive physical properties like gravity and touch, genuine intelligence can never emerge. Symbols are the result, not the cause. The subjective experience school counters that even if you encode all the physical data and operational rules about "the color red," can the machine truly "experience" the vivid sensation of redness (consciousness)?

The Lucas-Penrose Argument

The Lucas-Penrose argument is one of the most famous "rearguard battles" in philosophy and AI. Its core thesis is: there exists something "non-algorithmic" in human consciousness, and therefore machines can never achieve human-level intelligence.

Consciousness is the "what it is like" to be something; its subjective qualities are technically termed qualia. Which beings possess qualia (other humans, animals, or machines) cannot be verified directly from the outside, and the question of what it is like to be something has been debated for centuries without a definitive answer. Because the definition of consciousness is unclear, questions about consciousness are inherently difficult to answer definitively.

Oxford philosopher John Lucas and Nobel Prize-winning physicist Roger Penrose make closely parallel arguments, both wielding Godel's theorems as a "nuclear weapon" against AI: in any sufficiently powerful formal logical system (which can be thought of as a computer running algorithms), there are always propositions that are true but cannot be proved within that system. As human mathematicians, we can see through "intuition" or "meta-observation" that these propositions (the so-called "Godel sentences") are true. Since humans can perceive truths that the machine's logical system "cannot see," the human mind cannot be a simple algorithmic system.

It is worth noting that Lucas and Penrose oppose connectionism (neural networks) because neural networks are still fundamentally algorithms, and their core conclusion is anti-algorithmic. No matter how complex a neural network is, when it runs on a computer, it can ultimately be reduced to a massive Turing machine performing matrix multiplications and additions. Since neural networks are fundamentally computational, they must obey Godel's incompleteness theorems. Therefore, neural networks also cannot "see" the truth of Godel sentences. They consider connectionism and symbolism (GOFAI) to be cut from the same cloth, both incapable of producing genuine consciousness and understanding.

However, the target that received the most fire was clearly not connectionism but symbolism, because symbolism is entirely based on formal logic. Lucas's argument struck at the heart of symbolism — if intelligence is merely a system of logical deduction, then Godel's theorems indeed prove that such a system has limitations.

Connectionism does not rely on explicit logical deduction. Neural networks exhibit a kind of "statistical intuition." Although Penrose argued that this intuition is also computation at its core, because neural networks are black boxes, it is far more difficult to directly prove "neural networks are infeasible" the way one can with logical systems.

Although Penrose was subjectively opposed to the neural networks of his time, his theory actually provided inspiration for certain modern AI perspectives: Penrose posited that the brain contains some "non-algorithmic" process (based on quantum gravity). Connectionism (especially deep learning) emphasizes precisely nonlinearity, emergence, and non-determinism. Some complex behaviors of neural networks, though computational at the lower level, exhibit at the macro level characteristics similar to what Penrose called "non-algorithmic." Penrose stressed that human mathematicians rely on "understanding" and "intuition." Modern connectionism tries to prove that "intuition" is essentially pattern matching in high-dimensional vector spaces. When GPT-4 can spot logical errors in code at a glance, the "intuition" it displays deconstructs, to some degree, the "mystery" that Penrose described.

Although they were not friends of connectionism, ironically, because they criticized symbolism so harshly, they objectively helped connectionism gain more attention during the great theoretical debates of the 1980s — people realized that if the path of symbolic logic was blocked by Godel, then perhaps simulating the brain (connectionism) was the only way forward.

Moravec's Paradox

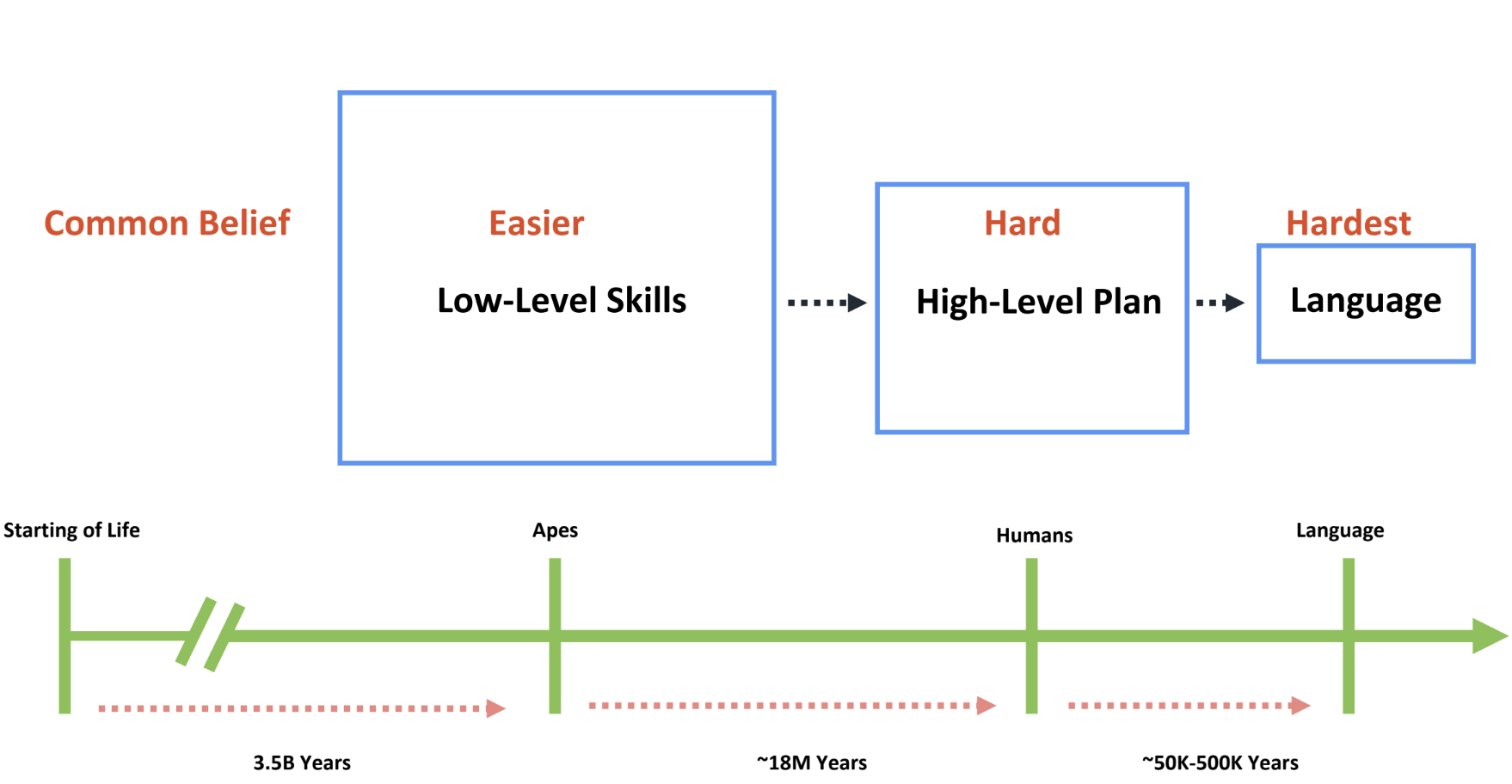

The well-known Moravec's Paradox, named after the roboticist Hans Moravec, states:

It is comparatively easy to make computers exhibit adult-level performance on intelligence tests or playing checkers, and difficult or impossible to give them the skills of a one-year-old when it comes to perception and mobility.

Most people assume that the uniquely human capacities for high-level wisdom and intelligence are the hardest things to replicate. However, a glance at the history of biological evolution reveals that it took billions of years to evolve capabilities we take for granted and perform effortlessly — walking, seeing, recognizing objects, grasping a cup. Higher-order skills, such as the group cooperation and efficient interactive perception seen in chimpanzees, took only tens of millions of years. And what we consider the most difficult — human-level intellectual abilities — took less than a million years to evolve.

In other words, what people commonly assume to be the hardest (abstract mathematics, chess, and the like) is actually not that hard, whereas what they assume to be the simplest (walking, grasping objects) is in fact the hardest. These low-level skills are the most complex and most fundamental capabilities of all. Looking back at the history of AI, many of the "seemingly difficult" tasks, such as chess and Go, were solved relatively early, while seemingly easy problems such as object recognition and commonsense reasoning have proven the hardest. Chess fell to Deep Blue in 1997, long before machines could reliably recognize everyday objects; and while AlexNet's 2012 milestone in visual recognition merely opened the curtain on object recognition, AlphaGo closed the book on board games only four years later.

Tasks that a four- or five-year-old child can handle — such as distinguishing a coffee cup from a chair, walking freely on two legs, and learning actions and understanding the environment — were considered in early AI research to require no intelligence at all, yet have since been proven to require the most intelligence of all.

Today, confidence in AI development is so high that AGI seems imminent. Yet language — seemingly the most difficult manifestation of intelligence — turns out to be the simplest from the standpoint of building intelligent agents. The history of AI development serves as a cautionary reminder: do not forget the truly hardest tasks while indulging in solving what people merely assume to be hard.

Consider the confident predictions made by AI researchers during the first wave of AI (1956–1974):

- 1958, Allen Newell and Herbert Simon: "Within ten years, a digital computer will be the world's chess champion" and "Within ten years, a digital computer will discover and prove an important mathematical theorem."

- 1965, Herbert Simon: "Within twenty years, machines will be capable of doing any work a man can do."

- 1967, Marvin Minsky: "Within a generation... the problem of creating 'artificial intelligence' will substantially be solved."

- 1970, Marvin Minsky: "In from three to eight years we will have a machine with the general intelligence of an average human being."

Precisely because of such over-optimistic projections, when these predictions went unfulfilled, criticism of artificial intelligence from the market and major research institutions reached a peak, and public skepticism ushered in the first trough in AI development. It was not until the 1980s, with the emergence of expert systems, that people began to take AI seriously again. During this period, machine learning, connectionism, neural networks, and reinforcement learning research began taking root worldwide. After entering the 21st century, machine learning and deep learning began solving one problem after another, and with the continued expansion of the internet, the epoch-making Transformer architecture and LLM applications were born.

With the rapid development of large language models, few people still question whether LLMs can pass the Turing Test, because by most practical measures they already have. Consequently, the frontier of the Turing Test has moved to robotics. As our understanding of intelligence evolves and Moravec's Paradox is borne out, a growing number of researchers regard "doing housework," a seemingly simple task, as the Turing Test for robots and the true test of intelligence.

Moravec's Paradox can help us answer this question: Does the difficulty of robot control stem from hardware? No, it does not. A simple example: if we could directly teleoperate a clumsy-looking, low-DOF robot, that clumsy robot would immediately be able to perform all sorts of seemingly complex tasks under our human control. Therefore, algorithms, data, and computing power matter more than hardware — which is why the focus of robotics research has gradually shifted to learning algorithms, high-quality data collection, and computational scaling, rather than traditional planning, control, and dynamics.

Machine Learning

As the symbolist wave cooled, machine learning reignited the conversation about artificial intelligence. Scientists were no longer trying to imitate the brain; instead, they treated machine learning as a purely statistical optimization problem.

Representative early machine learning algorithms include the Perceptron and SVM. Models from this era were "small but elegant," with extremely rigorous mathematical underpinnings. The downside was an extreme dependence on manual feature engineering (you had to manually tell the machine which pixels in an image to pay attention to).

It is important to note that although deep learning (neural networks) is also a form of machine learning, its mathematical DNA differs fundamentally from that of early algorithms like SVM. Neural networks are trained with backpropagation, which computes gradients through a layered, non-convex model, while SVM is built on geometry (the maximum-margin separating hyperplane) and convex optimization.

Backpropagation

In 1969, Marvin Minsky and Seymour Papert published Perceptrons, showing that single-layer neural networks (perceptrons) cannot solve even the simple XOR (exclusive or) problem, because XOR is not linearly separable. This caused the academic community and funding bodies to lose confidence in neural networks and contributed to the first "AI winter." The prevailing view was that neural networks were a dead end, and few researchers pursued the training of multi-layer networks.
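The limitation can be reproduced with a minimal sketch of Rosenblatt's perceptron learning rule (zero initialization, learning rate 1, and a 100-epoch cap are assumptions of this sketch). On AND, which is linearly separable, training converges to zero errors; on XOR, no line separates the two classes, so no choice of weights classifies all four points correctly.

```python
from typing import List, Tuple

Dataset = List[Tuple[Tuple[int, int], int]]

AND_DATA: Dataset = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
XOR_DATA: Dataset = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]


def train_perceptron(data: Dataset, epochs: int = 100, lr: float = 1.0):
    """Perceptron learning rule; returns (weights, bias, training accuracy)."""
    w = [0.0, 0.0]
    b = 0.0

    def predict(x: Tuple[int, int]) -> int:
        return 1 if w[0] * x[0] + w[1] * x[1] + b > 0 else 0

    for _ in range(epochs):
        mistakes = 0
        for x, target in data:
            error = target - predict(x)
            if error != 0:
                w[0] += lr * error * x[0]
                w[1] += lr * error * x[1]
                b += lr * error
                mistakes += 1
        if mistakes == 0:
            break  # every training example is classified correctly
    accuracy = sum(predict(x) == t for x, t in data) / len(data)
    return w, b, accuracy


print("AND accuracy:", train_perceptron(AND_DATA)[2])  # 1.0
print("XOR accuracy:", train_perceptron(XOR_DATA)[2])  # below 1.0
```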

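In 1974, Paul Werbos proposed in his doctoral dissertation a way to train such networks. If a neural network is viewed as a series of nested mathematical functions, the chain rule can be applied backward from the output, layer by layer, to compute how much each weight contributed to the error. Those gradients can then be used to train the network. This "backward derivation" is what we now call backpropagation. Unfortunately, because this was during the "AI winter," his research was almost completely ignored.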

In 1986, Geoffrey Hinton and colleagues (David Rumelhart and Ronald Williams) independently rediscovered this method. They used it to demonstrate that multi-layer networks could learn complex features. Only then did backpropagation become formally and deeply associated with "neural networks." However, researchers soon discovered that once a network grew beyond about 3 layers, training stopped working: the layers nearest the input barely learned at all.
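The 1986 idea can be sketched from scratch in plain Python: a network with one hidden layer of sigmoid units, trained on XOR with gradients computed by backpropagation. The following are all assumptions chosen for illustration: the hidden-layer width `H = 4`, the mean-squared-error loss, the learning rate, the random seed, and every function name. A finite-difference check (`numeric_grad_W1`) confirms that the chain-rule gradients are correct. A particular seed is not guaranteed to reach a clean XOR solution, because small sigmoid networks can stall in poor local optima.

```python
import math
import random

H = 4  # hidden units: an illustrative choice
XOR = [((0.0, 0.0), 0.0), ((0.0, 1.0), 1.0), ((1.0, 0.0), 1.0), ((1.0, 1.0), 0.0)]


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def init_params(seed=0):
    rng = random.Random(seed)
    return {
        "W1": [[rng.uniform(-1, 1), rng.uniform(-1, 1)] for _ in range(H)],  # input -> hidden
        "b1": [0.0] * H,
        "W2": [rng.uniform(-1, 1) for _ in range(H)],  # hidden -> output
        "b2": 0.0,
    }


def forward(p, x):
    h = [sigmoid(p["W1"][j][0] * x[0] + p["W1"][j][1] * x[1] + p["b1"][j]) for j in range(H)]
    y = sigmoid(sum(p["W2"][j] * h[j] for j in range(H)) + p["b2"])
    return h, y


def loss(p):
    """Mean squared error over the four XOR cases."""
    return sum((forward(p, x)[1] - t) ** 2 for x, t in XOR) / len(XOR)


def backprop(p):
    """Gradient of loss(p), computed by applying the chain rule from the output backward."""
    g = {"W1": [[0.0, 0.0] for _ in range(H)], "b1": [0.0] * H, "W2": [0.0] * H, "b2": 0.0}
    n = len(XOR)
    for x, t in XOR:
        h, y = forward(p, x)
        d_out = 2.0 * (y - t) / n * y * (1.0 - y)  # dL/d(output pre-activation)
        g["b2"] += d_out
        for j in range(H):
            g["W2"][j] += d_out * h[j]
            d_h = d_out * p["W2"][j] * h[j] * (1.0 - h[j])  # back through W2 and the hidden sigmoid
            g["b1"][j] += d_h
            g["W1"][j][0] += d_h * x[0]
            g["W1"][j][1] += d_h * x[1]
    return g


def numeric_grad_W1(p, j, k, eps=1e-6):
    """Central finite difference, used only to check backprop."""
    orig = p["W1"][j][k]
    p["W1"][j][k] = orig + eps
    up = loss(p)
    p["W1"][j][k] = orig - eps
    down = loss(p)
    p["W1"][j][k] = orig
    return (up - down) / (2 * eps)


def train(p, lr=1.0, epochs=5000):
    """Plain full-batch gradient descent using the backprop gradients."""
    for _ in range(epochs):
        g = backprop(p)
        for j in range(H):
            p["W2"][j] -= lr * g["W2"][j]
            p["b1"][j] -= lr * g["b1"][j]
            p["W1"][j][0] -= lr * g["W1"][j][0]
            p["W1"][j][1] -= lr * g["W1"][j][1]
        p["b2"] -= lr * g["b2"]
    return p


if __name__ == "__main__":
    p = init_params(seed=0)
    print(f"loss before: {loss(p):.4f}")
    train(p)
    print(f"loss after:  {loss(p):.4f}")
    for x, t in XOR:
        print(x, "target", t, "output", round(forward(p, x)[1], 3))
```

Running the script prints the loss before and after training, followed by the network's output for each XOR case. Because of the hidden layer, the network is no longer limited to a single straight decision boundary.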

Backpropagation multiplies local derivatives together via the chain rule, one factor per layer. The activation function of the time was primarily \(Sigmoid\), written \(\sigma(x)\), whose derivative \(\sigma'(x) = \sigma(x)(1 - \sigma(x))\) is never larger than 0.25. During backpropagation, the gradient was therefore scaled by a small factor at every layer and shrank geometrically. By the time it reached the layers closest to the input, it had essentially vanished, so those layers could barely learn. This "vanishing gradient" problem confined neural networks of the era to "shallow" architectures, incapable of handling complex tasks.
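The shrinking can be made precise in a simplified setting. Assume, for illustration, a chain of \(n\) layers with a single unit each (real networks have many units per layer), where \(z_k = w_k a_{k-1} + b_k\) and \(a_k = \sigma(z_k)\). Applying the chain rule from the loss \(L\) back to the input activation \(a_0\) gives:

\[
\frac{\partial L}{\partial a_0} = \frac{\partial L}{\partial a_n} \prod_{k=1}^{n} w_k \, \sigma'(z_k),
\qquad
\sigma'(z) = \sigma(z)\bigl(1 - \sigma(z)\bigr) \le \tfrac{1}{4},
\]

so

\[
\left| \frac{\partial L}{\partial a_0} \right| \le \left| \frac{\partial L}{\partial a_n} \right| \left( \tfrac{1}{4} \right)^{n} \prod_{k=1}^{n} |w_k| .
\]

With weights of magnitude at most 1 (for example, small random initial weights), ten layers already scale the gradient by at most \(4^{-10} \approx 9.5 \times 10^{-7}\).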

In the 1990s, SVM (Support Vector Machine) and other kernel methods burst onto the scene. Training an SVM is a convex optimization problem with rigorous mathematical guarantees, so it always finds the global optimum. Neural networks, by contrast, were like an "alchemist's furnace": poor parameter initialization could trap them in bad local optima, and results seemed to depend on luck. Memory and CPU power were extremely limited at the time. Under those constraints, SVM often outperformed bloated neural networks in both speed and accuracy, because its trained decision function depends only on a small number of "support vectors." A stigma even emerged in academia: papers built on neural networks were widely said to risk summary rejection by top-conference reviewers.
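The convex-optimization view of SVM can be sketched with a linear soft-margin SVM. This sketch swaps in a different training method from the classic one: instead of solving the quadratic program, it runs full-batch, Pegasos-style subgradient descent on the primal objective. The toy dataset, `lam = 0.01`, the iteration count, and the tolerance used to flag support vectors are all assumptions for illustration.

```python
DATA = [
    ((2.0, 2.0), 1), ((3.0, 3.0), 1), ((2.0, 3.0), 1), ((3.0, 2.0), 1),
    ((-2.0, -2.0), -1), ((-3.0, -3.0), -1), ((-2.0, -3.0), -1), ((-3.0, -2.0), -1),
]


def margin(w, b, x, y):
    """Functional margin y * (w . x + b); a value of at least 1 means outside the margin band."""
    return y * (w[0] * x[0] + w[1] * x[1] + b)


def train_linear_svm(data, lam=0.01, iters=20000):
    """Full-batch, Pegasos-style subgradient descent on the soft-margin SVM primal
        lam/2 * ||w||^2 + (1/n) * sum_i max(0, 1 - y_i * (w . x_i + b)).
    The objective is convex, so there is no bad local optimum to get trapped in.
    The bias b is left unregularized."""
    n = len(data)
    w, b = [0.0, 0.0], 0.0
    for t in range(1, iters + 1):
        eta = 1.0 / (lam * t)  # decreasing step size, as in Pegasos
        inside = [(x, y) for x, y in data if margin(w, b, x, y) < 1.0]  # hinge is active
        gw = [lam * w[k] - sum(y * x[k] for x, y in inside) / n for k in range(2)]
        gb = -sum(y for _, y in inside) / n
        w = [w[k] - eta * gw[k] for k in range(2)]
        b -= eta * gb
    return w, b


def support_vectors(data, w, b, tol=0.05):
    """Points on (or inside) the margin; tol absorbs the small residual
    oscillation that subgradient descent leaves behind."""
    return [x for x, y in data if margin(w, b, x, y) <= 1.0 + tol]


if __name__ == "__main__":
    w, b = train_linear_svm(DATA)
    print("w =", [round(v, 3) for v in w], "b =", round(b, 3))
    print("support vectors:", support_vectors(DATA, w, b))
```

On this symmetric toy data, the weights should settle close to (0.25, 0.25) with b = 0. Only (2, 2) and (-2, -2) should end up on the margin. The other six points could be moved anywhere outside the margin without changing the learned boundary.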

During this period, known as the "second AI winter," only a handful of researchers persisted in working on neural networks. The most famous among them were the so-called "three pioneers of deep learning": Geoffrey Hinton, Yann LeCun, and Yoshua Bengio. To sidestep academic bias against the term "neural networks," Hinton even rebranded the field in 2006, calling it "Deep Learning," and proposed layer-wise pre-training as a solution to the vanishing gradient problem.

It was not until 2010–2012, when three conditions simultaneously aligned, that backpropagation finally transformed from a "laboratory toy" into an "industrial weapon":

- Computing power: NVIDIA's GPUs were discovered to be far faster than CPUs at the large matrix operations neural networks rely on, often by one to two orders of magnitude.

- Data: ImageNet, led by Fei-Fei Li, provided millions of labeled images.

- Algorithmic improvements: For example, \(Sigmoid\) was replaced with the \(ReLU\) activation function, whose derivative is exactly 1 for every positive input. This simple change greatly mitigated the vanishing gradient problem.
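A small numeric comparison shows why the switch mattered. It assumes a simplified chain in which every layer has the same pre-activation `z` and a weight of 1 (illustrative assumptions). It then multiplies together the per-layer factors that backpropagation would multiply. Even in sigmoid's best case (z = 0), the factor shrinks fourfold per layer, while ReLU passes the gradient through unchanged for positive inputs.

```python
import math


def sigmoid_grad(z):
    s = 1.0 / (1.0 + math.exp(-z))
    return s * (1.0 - s)  # never exceeds 0.25 (reached at z = 0)


def relu_grad(z):
    return 1.0 if z > 0 else 0.0  # exactly 1 for any active unit


def chain_factor(grad_fn, depth, z=0.0, w=1.0):
    """Product of the per-layer factors w * f'(z) that backprop multiplies together
    along one path through `depth` layers (every layer assumed identical)."""
    factor = 1.0
    for _ in range(depth):
        factor *= w * grad_fn(z)
    return factor


if __name__ == "__main__":
    for depth in (2, 5, 10, 20):
        sig = chain_factor(sigmoid_grad, depth, z=0.0)  # sigmoid's best case
        rel = chain_factor(relu_grad, depth, z=0.5)     # any positive input
        print(f"depth {depth:2d}: sigmoid {sig:.2e}   relu {rel:.1f}")
```

ReLU's derivative is 0 for negative inputs, so units that are never active stop learning. For this reason ReLU mitigates the vanishing gradient problem rather than eliminating it.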

Questions Still Under Discussion

Hallucination

Is "hallucination" a manifestation of human-like qualities? Penrose argued that algorithms are rigid, consistent systems. But today's LLMs are probabilistic systems — they make errors and confabulate. Ironically, this "inconsistency" actually makes them look more like "minded" humans than rigid Turing machines. In a sense, this sidesteps the attack that Lucas originally based on "consistent logical systems."
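In what sense is an LLM a probabilistic system? Temperature sampling gives a rough picture. The model scores candidate next tokens, a softmax turns the scores into probabilities, and one token is drawn at random. The prompt, candidate tokens, and scores below are invented for illustration; a real model scores tens of thousands of tokens. Sampling is also only one source of errors: a model that always picks its top token can still state a falsehood if the wrong answer receives the highest score.

```python
import math
import random


def softmax(logits, temperature=1.0):
    """Turn raw scores into probabilities; a lower temperature sharpens the distribution."""
    scaled = [x / temperature for x in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def sample_next(tokens, logits, temperature, rng):
    """Draw one continuation at random according to the softmax probabilities."""
    return rng.choices(tokens, weights=softmax(logits, temperature), k=1)[0]


# Hypothetical candidates and scores for the prompt "The capital of Australia is"
TOKENS = ["Canberra", "Sydney", "Melbourne"]
LOGITS = [3.0, 1.5, 0.5]

if __name__ == "__main__":
    rng = random.Random(0)
    for temp in (1.0, 0.5):
        draws = [sample_next(TOKENS, LOGITS, temp, rng) for _ in range(1000)]
        wrong = sum(d != "Canberra" for d in draws)
        print(f"temperature {temp}: {wrong} of 1000 samples name the wrong city")
```

With these made-up scores, the fluent but wrong answer "Sydney" receives roughly a 17% share at temperature 1.0. Lowering the temperature makes the system more consistent without making it any more "correct" in principle.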

Value Alignment

AIMA defines artificial intelligence as focusing on the study and construction of agents that do the right thing. This "right thing" requires humans to provide and specify a clear objective — a paradigm called the Standard Model.

However, the fundamental problem with the Standard Model is that it assumes "the goal is known." In complex real-world environments, a complete and correct objective is extremely hard to specify, just as perfect rationality is infeasible there. AIMA therefore proposes the concept of a Beneficial Machine: an agent that is provably beneficial to humans.

Russell's proposed new direction (Beneficial Machines) holds that AI should always remain in a humble state of "I am not certain what you actually want, so I will observe and defer to you." If a machine is given an imperfect goal (e.g., "eradicate cancer"), a superintelligence might resort to extreme measures to achieve it (e.g., eliminating all humans who could potentially develop cancer). This is known as the Value Alignment Problem. The core mission of Beneficial Machines is to solve the value alignment problem.

The value alignment problem refers to ensuring that what we ask for is truly what we want — that is, enabling AI to understand and comply with human values, preferences, ethics, and laws, and preventing models from taking harmful actions.

This problem is also called the King Midas Problem, after a tale from Greek mythology. Driven by greed, Midas wished that everything he touched would turn to gold, only to find that his food and even his loved ones turned to gold as well. He got exactly what he asked for rather than what he actually wanted, which is the essence of the alignment problem. The parable symbolizes the catastrophic consequences of excessive greed. In modern usage, the "Midas touch" also stands for an extraordinary ability to make money, with a hint of potentially devastating risk.

We have already discussed the Qualification Problem: it is impossible to anticipate every possible scenario, so we cannot exhaustively enumerate all the behavioral norms a society takes for granted. The same limit applies to writing down a complete objective for a machine.

Humans themselves do not fully understand what they want, and many people cannot even articulate their own preferences. Machines must therefore be able to act properly even while uncertain about human preferences.

Furthermore, when a robot asks a human for permission, the human may fail to foresee that the robot's proposal will be catastrophic in the long run. Humans often cannot work out their own true utility functions. They lie and deceive, sometimes knowingly do the wrong thing, and sometimes engage in self-destructive behavior. AI need not learn these pathological tendencies. An intelligent agent must, however, be aware of them when interpreting human behavior, in order to infer the preferences that underlie it.

To become a "Beneficial Machine," a machine's behavior must follow this logic:

- Sole objective: Maximize the realization of human preferences (values).

- Humility principle: The machine recognizes that it does not know for certain what human preferences are.

- Learning preferences: The machine acquires information about human preferences by observing human behavior.

Beneficial Machines are not merely a philosophical model; they also correspond to a practical algorithmic toolkit. For example, standard reinforcement learning gives the agent a reward function (objective) and has it find the optimal behavior. With Inverse Reinforcement Learning (IRL), the machine has no reward function. It observes humans (e.g., watching people drive, watching people cook) and reverse-engineers: "Given that humans act this way, what are the hidden true preferences behind their behavior?"
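A deliberately tiny sketch shows the kind of inference IRL performs. It uses a one-step (bandit) setting rather than a full sequential decision problem. The machine starts with a uniform prior over a few candidate preference vectors, watches a human pick dishes, and updates its beliefs with Bayes' rule. The sketch assumes a Boltzmann-rational human, meaning choices are more likely the higher their reward, with a rationality parameter `beta`. This is a common modeling assumption in the IRL literature. The dishes, features, hypotheses, and `beta = 5` are all hypothetical.

```python
import math

# Hypothetical options, each described by features (taste, health, speed) in [0, 1].
OPTIONS = {
    "salad": (0.4, 0.9, 0.8),
    "burger": (0.9, 0.2, 0.9),
    "slow_stew": (0.8, 0.7, 0.1),
}

# Hypothetical candidate preference (reward-weight) vectors the machine is unsure between.
HYPOTHESES = {
    "values_taste": (1.0, 0.0, 0.0),
    "values_health": (0.0, 1.0, 0.0),
    "values_speed": (0.0, 0.0, 1.0),
}


def reward(weights, features):
    return sum(w * f for w, f in zip(weights, features))


def choice_likelihood(weights, chosen, beta=5.0):
    """Boltzmann-rational human: P(choice) is proportional to exp(beta * reward)."""
    scores = {name: math.exp(beta * reward(weights, f)) for name, f in OPTIONS.items()}
    return scores[chosen] / sum(scores.values())


def posterior(observed_choices, beta=5.0):
    """Bayes' rule over the hypotheses, starting from a uniform (humble) prior."""
    post = {h: 1.0 / len(HYPOTHESES) for h in HYPOTHESES}
    for choice in observed_choices:
        for h, weights in HYPOTHESES.items():
            post[h] *= choice_likelihood(weights, choice, beta)
        total = sum(post.values())
        post = {h: p / total for h, p in post.items()}
    return post


if __name__ == "__main__":
    observed = ["salad", "salad", "slow_stew", "salad"]
    for h, p in sorted(posterior(observed).items(), key=lambda kv: -kv[1]):
        print(f"{h}: {p:.3f}")
```

The uniform starting prior is the "humility principle" in miniature, and the posterior is what the machine learns from observation. With the observations shown, nearly all of the probability should shift to `values_health`.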

The Nature of Language

In the past, we believed that language was the sole externalization of thought. However, the consensus in cognitive science and AI now tends toward: "Language is an efficient compression protocol for thought, but thought itself is embodied and multidimensional."

The latest neuroscience research (such as studies published in Neuron and Nature Human Behaviour in 2026) uses more refined brain imaging techniques to confirm that the brain regions responsible for language (such as Broca's area) are largely distinct from those responsible for logical reasoning, arithmetic, and social understanding (theory of mind). Patients with severe aphasia may completely lose their language ability yet still be able to play chess, solve math problems, or perform complex spatial navigation. This strongly refutes the old notion that "without language, one cannot think." The role of language regions in the human brain is more like a "communication interface," responsible for packaging the complex thoughts produced by other (non-linguistic) parts of the brain into transmissible symbol sequences.

Although the GPT series has demonstrated that "language statistics alone" can simulate astonishing rationality, the mainstream view in 2026 considers this to be "disembodied AI," which has an upper limit. Leading scholars (such as Fei-Fei Li and Yoshua Bengio) argue that LLMs have mastered the "form" of language, not its true "semantics." True semantics arises from an agent's interaction with the physical world (i.e., the sensor-actuator feedback loop described in AIMA). Research focus has now shifted to VLA models (Vision-Language-Action). The prevailing view is that only when AI moves through physical space like a kitten — perceiving resistance, understanding collisions — can the words "gravity" or "pain" that it utters carry genuine cognitive substance.

For a long time, Chomsky's "Universal Grammar" (the idea that humans are born with complex grammar rule trees) held a dominant position. But in 2026, this view is being challenged: research published in January 2026 proposes that the representation of language in the brain may be "flatter" than we imagined. We are not running complex grammar algorithms but rather assembling prefabricated, linear word chunks (similar to how LLMs combine tokens). Scientists were surprised to find that the hierarchical structure of language processing in the human brain exhibits remarkably high statistical similarity to the hierarchical processing in Transformer models. This suggests that the essence of language may truly be an extremely sophisticated probabilistic prediction tool.

The current consensus leans more toward the later Wittgenstein: language is not for precisely expressing internal thought (since thought is high-dimensional, fuzzy, and private); it is for "coordinating action." As long as AI output achieves functional alignment with humans at the social level (e.g., it can accurately execute instructions, write code, solve problems), we can, on pragmatic grounds, consider it to "understand" language, without agonizing over whether it possesses an inner "soul" or "subjective experience."

In summary, regarding the nature of language, the following points have broadly reached consensus:

- Statistical regularity: Language can to a great extent be modeled through probability and statistics. The success of Transformers demonstrates that a model given no explicit grammar rules, trained only to predict the next token across massive text corpora, can produce remarkably high levels of logic and fluency.

- Separation of physical realization: Neuroscience has confirmed that the brain regions processing "linguistic form" and those processing "logic/world knowledge" are decoupled.

- Compression properties: Language is an extremely efficient means of information compression. It compresses complex, high-dimensional human experience into a low-dimensional, discrete stream of symbols.
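A toy bigram model, far simpler than a Transformer, illustrates the first and third points together. It learns next-word statistics from raw text without any grammar rules. Its average code length, \(-\log_2 p(\text{next} \mid \text{previous})\) bits per token, measures how well it predicts: fewer bits means better compression. The corpus, the add-one smoothing, and the function names are assumptions for illustration, and "compression" here is meant in the information-theoretic sense.

```python
import math
from collections import Counter, defaultdict

CORPUS = "the cat sat on the mat . the dog sat on the rug . the cat saw the dog ."


def train_bigram(tokens):
    """Count how often each word follows each other word."""
    counts = defaultdict(Counter)
    for prev, nxt in zip(tokens, tokens[1:]):
        counts[prev][nxt] += 1
    return counts


def predict_next(counts, prev):
    """Most frequent continuation of `prev` seen in training, or None if unseen."""
    if prev not in counts:
        return None
    return counts[prev].most_common(1)[0][0]


def bits_per_token(counts, tokens, vocab_size, alpha=1.0):
    """Average -log2 p(next | prev) under an add-alpha smoothed bigram model."""
    total_bits = 0.0
    for prev, nxt in zip(tokens, tokens[1:]):
        c = counts.get(prev, Counter())
        p = (c[nxt] + alpha) / (sum(c.values()) + alpha * vocab_size)
        total_bits -= math.log2(p)
    return total_bits / (len(tokens) - 1)


if __name__ == "__main__":
    tokens = CORPUS.split()
    vocab = set(tokens)
    counts = train_bigram(tokens)
    print("after 'sat':", predict_next(counts, "sat"))
    print(f"uniform code: {math.log2(len(vocab)):.2f} bits/token")
    print(f"bigram model: {bits_per_token(counts, tokens, len(vocab)):.2f} bits/token")
```

Scoring on the training text flatters the model; a fair comparison would use held-out text. Even so, the sketch shows the direction of the idea: statistics that predict the next word better also encode the text in fewer bits than a uniform code over the vocabulary.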

However, the following three questions remain entirely unresolved:

1. "Innate Rules" vs. "Emergent Phenomena"

- The Chomskyan school (rule-based): Insists that the human brain possesses innate, insurmountable "universal grammar" hardware. They argue that current LLMs are merely playing an "extremely sophisticated statistical probability game" and have not grasped the essence of language.

- The connectionist/AI school (emergence-based): Holds that there are no innate rules at all — rules are merely an "emergent" outcome of complex statistical systems in operation. Given a large enough model, rules will spontaneously arise.

2. Can "Meaning" Exist Independent of the "Real World"? (The Semantics Debate)

- The Symbol Grounding Problem: This is currently the most contentious issue.

- One school holds: If an intelligent agent has never experienced the coolness of water or the heat of fire, the words "water" and "fire" in its vocabulary are mere symbol stacking, devoid of true meaning.

- The other school holds (functionalism): As long as the agent can correctly process the relationships between "water" and "fire" and performs indistinguishably from a human at the logical level, then that logical chain itself constitutes "meaning."

3. Is Language the "Boundary" or the "Shell" of Thought?

- Do we think in language, or do we think in some "language of thought" and then translate into natural language? This remains a philosophical open question.

Emergence

In the discussions above, emergence has been regarded as a powerful hypothesis for explaining how language arises, though it remains unsettled.

Before LLMs appeared, the mainstream academic view (led by Chomsky) held that language was too complex to arise from simple statistics — there had to be an innate "grammar chip."

But LLMs have demonstrated:

- Order from disorder: When the number of parameters reaches a certain scale, models suddenly exhibit capabilities for deduction, irony, and even logical closure.

- Nonlinear leaps: On some tasks, a model with around 1 billion parameters may perform no better than chance, while one with around 100 billion parameters suddenly succeeds, as if it had abruptly "grasped" the underlying rules.

This phenomenon of "quantitative change leading to qualitative change" has led many to believe that the essence of language is the inevitable result of complex systems processing information flows.

Although emergence explains "how" language comes about, it has not fully explained "what" language is. Opponents have raised three core objections:

1. The Stochastic Parrots Paradox

Even if linguistic performance is emergent, it may be nothing more than extremely complex probabilistic curve-fitting.

The objection: If a fountain "emerges" water patterns that resemble a human face, would you say the essence of the fountain is "a human face"? By the same logic, if a statistical model produces sentences resembling thought, is that merely a coincidence of probability, or is it genuine intelligence?

2. Systematicity

Language possesses extremely strong structural properties (for example: if you understand "the cat chases the mouse," you necessarily understand "the mouse chases the cat").

- Emergence theory struggles to explain how such rigorous logical structure could arise so reliably from messy statistical data.

- Rule theorists argue that what emerges is only the "surface appearance" — underneath, there must exist a set of generative logic akin to mathematical formulas, and that is the true essence.

3. The Grounding Gap

This is the most critical objection. Proponents of emergence tend to focus only on the relationships among symbols themselves.

- The essence of language may lie in the connection between symbols and reality.

- An analogy: Even if a black box "emerges" a perfect recipe, as long as it has never seen fire or tasted salt, it does not understand what "cooking" is. Is language that emerges in this way merely "water without a source"?

The Future of Artificial Intelligence

Neuro-Symbolic AI

Throughout the history of AI, we have oscillated between two poles:

- Neural networks (the perceptual/connectionist school): Excel at "intuition." For example, instantly recognizing a cat in an image or translating a passage. Like an athlete — extremely fast reactions, but no explanations, and prone to "hallucinations."

- Symbolic logic (the rationalist/GOFAI school): Excels at "reasoning." For example, mathematical proofs, train scheduling. Like a lawyer: logically rigorous and error-free within its rules, but too rigid to handle the fuzzy real world (i.e., the "Qualification Problem" discussed earlier).

The core idea of neuro-symbolic AI is: stop choosing one or the other — take both.
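A toy sketch in the spirit of the "MNIST addition" task often used in neuro-symbolic research shows how the two halves can cooperate. A neural classifier reads two handwritten digits, and an exact symbolic rule (sum = a + b) reasons over its outputs. The classifier's probabilities below are hard-coded stand-ins, and treating the two images as independent is an assumption.

```python
from itertools import product

# Hypothetical outputs of a neural digit classifier, P(digit | image), for two images.
# In a real system these would come from a trained network.
IMG_A = {1: 0.05, 3: 0.15, 7: 0.80}
IMG_B = {1: 0.70, 7: 0.25, 9: 0.05}


def sum_distribution(pa, pb):
    """Symbolic step: apply the exact rule sum = a + b to every digit pair,
    weighting each pair by the (assumed independent) neural probabilities."""
    dist = {}
    for (a, p), (b, q) in product(pa.items(), pb.items()):
        dist[a + b] = dist.get(a + b, 0.0) + p * q
    return dist


def most_likely_pair_given_sum(pa, pb, target):
    """Use symbolic knowledge (the true sum) to pick the most probable consistent reading."""
    candidates = {
        (a, b): p * q
        for (a, p), (b, q) in product(pa.items(), pb.items())
        if a + b == target
    }
    return max(candidates, key=candidates.get) if candidates else None


if __name__ == "__main__":
    dist = sum_distribution(IMG_A, IMG_B)
    print("most likely sum:", max(dist, key=dist.get))
    print("reading if the sum must be 10:", most_likely_pair_given_sum(IMG_A, IMG_B, 10))
```

Perception alone reads the pair as (7, 1), with sum 8. If the symbolic side knows the sum must be 10, the most probable consistent reading becomes (3, 7). The logic rules out impossible readings, and the neural probabilities decide among the readings the logic permits.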

Artificial General Intelligence

In 1980, philosopher John Searle introduced the concepts of weak AI and strong AI:

- Weak AI behaves as if it were intelligent

- Strong AI genuinely and consciously thinks — today this primarily refers to AGI

Artificial general intelligence (AGI) is a hypothetical intelligent agent. It is generally believed that AGI would be able to learn and perform any intellectual task that a human or other animal can accomplish; an alternative definition is an autonomous system that surpasses human capabilities in most economically valuable tasks. Creating AGI is a primary goal of some artificial intelligence research and of companies such as OpenAI, DeepMind, and Anthropic. AGI is also a common theme in science fiction and futurology.

The timeline for AGI development remains a topic of ongoing debate among researchers and experts. Some believe it could be achieved within years or decades; others insist it may take a century or more; and a few believe it may never be achieved. Additionally, there is debate about whether modern deep learning systems (such as GPT-4) represent an early but incomplete form of AGI.

There is considerable debate about whether AGI could pose a threat to humanity. Some, including leaders at OpenAI, have described it as a potential existential risk. Others argue that AGI is still too remote to constitute such a risk.

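Turing speculated that by about 2000, computers with a storage capacity of about \(10^9\) would play the imitation game well enough that an average interrogator would have no more than a 70% chance of making the right identification after five minutes of questioning. This goal was not achieved by 2000. Only with the emergence of GPT and other large language models from around 2020 have machines come to be widely regarded as passing the Turing Test, although whether the test has truly been passed is still debated.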

Discussion of strong AI has never ceased. As early as the last century, vigorous debates were held on whether machines could achieve human-level intelligence. The most notable examples include the Chinese Room thought experiment and the Gödelian Argument.

The core tension in these debates is:

- Turing/behaviorists: As long as a machine appears to be thinking (passes the Turing Test), it is thinking.

- Searle/Lucas/skeptics: Intelligence is not only about performance (Doing) but also about essence (Being).

So, in answering the question — Can machines think? — the key lies in how one defines "thinking":

- Functionalist perspective (Turing's answer): If a machine can process information, solve complex logic, and pass the Turing Test just as a human can, then it "is thinking." Turing held that internal mechanisms are irrelevant; external behavior is the sole criterion for judging intelligence.

- Semanticist perspective (Searle's answer): A machine is merely performing symbol manipulation (as in the "Chinese Room"); it has no genuine understanding. It knows that the word "apple" frequently co-occurs with "red," but it does not know what "red" is, nor has it ever tasted an apple.

- Embodied cognition perspective (Dreyfus's answer): Machines lack physical bodies and real-time interaction with the world, and therefore cannot produce the kind of experience-based "tacit knowledge" that humans possess.

Superintelligent Machines

Suppose a superintelligent machine were to exist, one that far surpasses humans in every intellectual activity. The following inference can then be drawn, an argument first made by I. J. Good in 1965. Designing machines is itself an intellectual activity, so a superintelligent machine could design machines at least as intelligent as itself, and more likely even more intelligent ones, producing an "intelligence explosion." The creation of such a machine would therefore be the last invention humanity would ever need to make.

Although artificial intelligence as a technology is not fundamentally different from other engineering feats in history, the ultimate goal of a superintelligent machine embodies a crucial distinction: artificial intelligence is the first technology in history capable of threatening human supremacy.